Most People Think AI Content Is Slop. It’s About To Become Caviar

According To A New Mental Model

The text, audio, and slides in this article were hand-designed and AI-crafted with my unique cognitive signature, voice, and style. If you’re interested in working with me one-on-one to create your own thought leadership system, respond to this post with “BLOCKBUSTER” via email or in the comments below.

When a Byzantine princess used one at her wedding in 1004, a clergyman denounced her for “excessive delicacy.” According to a historian, when she died of plague two years later, the Church called it divine punishment.

When Henry III of France used one in 1575, his courtiers sneered: “Of course you would. You dress like a woman.”

When an English traveler brought one back from Italy in 1608, his friends gave him a new nickname: Furcifer, a Latin insult referring to his new habit.

What were they all being mocked for?

Eating with a fork!

For 600 years, Europe’s elites dismissed the fork as a mark of vanity. Queen Elizabeth I felt it was crude and preferred to eat with her fingers. Church leaders said it looked like the Devil’s pitchfork.

Every era found a new reason to reject it.

Yet today, billions of people use one every day without thinking.

The Thing You Mock Today May Be Your Biggest Opportunity Tomorrow

We see this stigma-to-success pattern throughout history:

Tattoos were a mark of sailors and convicts before kindergarten teachers and baristas made them mainstream.

Therapy was something you only admitted to if you were “in a dark place” before it was what every high-achiever scheduled.

Working from home was for slackers who couldn’t hack a real office before it was the default arrangement for the most productive knowledge workers in the economy.

Meditation was hippie-monk weirdness your coworkers would quietly mock before it was the Calm app every executive opens before a board meeting.

Each shift begins the same way. A small group tries the thing. The majority mocks them. Years pass. The mockers quietly start doing it too, denying they ever mocked it, and the practice gets a new respectable name.

The writing is always on the wall, and almost no one reads it.

If you’re a creator or entrepreneur, this pattern is particularly worth understanding. At a fundamental level, you create value by doing or saying things that are useful when others can’t, won’t, or haven’t.

However, to fully capitalize on the phenomenon, you need answers:

Why do some things go from stigma to success while others don’t?

What are the predictable steps in the stigma-to-success shift?

Where are there great opportunities that are overlooked simply because they’re temporarily stigmatized?

How can you time when the shift will happen?

This article answers all four questions and uncovers one of AI’s biggest opportunities in the process.

The Stigma-To-Success Shift Happens Especially Fast With AI

What took centuries for the fork only takes months or years with AI.

For example:

In 2022, using ChatGPT for professional work was dismissed as “a fun toy” before it became table stakes in knowledge work. The people who treated it seriously that year are now years ahead of the ones who waited.

Two years ago, using AI for research was considered “lazy” and “unreliable” because “AI hallucinates.” The people who used it anyway, learning to verify as they went, are now years ahead of the skeptics.

Two years ago, using AI for hard conversations (grief, conflict, career decisions) was considered a “sad substitute for real connection.” Today, therapy is the #1 consumer use case of AI.

Right now, we are inside exactly such a moment with AI-generated content…

AI-Generated Content Is At The Inflection Point Right Now

Most people have a fixed mental model of AI-generated content:

“AI content is slop.”

“AI content will stay slop.”

“Anyone producing AI content is lazy.”

“AI content is contributing to the cultural garbage pile.”

They have receipts too:

LinkedIn flooded with robotic posts.

Amazon listings poisoned by AI-generated books citing nonexistent studies.

AI news sites hallucinating quotes from real people.

The slop is real. No argument there.

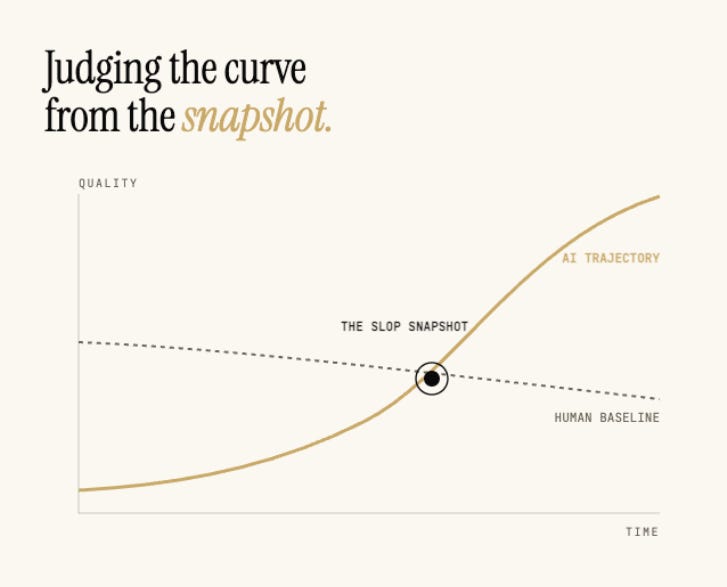

But a snapshot is not a trajectory...

The Handcraft Illusion: What you think is handcrafted probably isn’t

Every “AI is slop” argument rests on a silent comparison.

AI content. Artificial. Mechanical. Mass-produced. The word “artificial” is literally in the name.

Handcrafted human content. A real writer at a real desk, pulling sentences out of their own mind, each word chosen by hand.

This comparison has multiple hidden assumptions, and pulling on any one of them makes the slop argument collapse. Let’s start with the most obvious one.

How much of what you love is actually handcrafted?

By handcrafted, I mean made by a specific human, one at a time, using traditional tools, the way things were made before the Industrial Revolution. Not “artisan branded.” Actually made by a person’s hands.

Try this thought exercise:

Inventory the next room you’re in. Chair. Desk. Computer. Clothes. Cup. Light fixture. Flooring. Paint. Phone.

How much of what you are looking at is handcrafted?

For almost everyone reading this, the answer is close to zero. Maybe a ceramic mug. Maybe one painting. That is it.

This matters because the objection I hear most about AI content is some version of “I like handcrafted. I like knowing a human made this.”

The feeling is real.

The feeling also only governs about 4% of our actual buying behavior.

The other 96% shows up on your Visa statement. Mass-manufactured clothes from a global supply chain. A computer assembled by robots in Shenzhen. Cutlery stamped from sheet metal at four thousand units per hour. Even the local farm eggs came in packaging mass-produced in an Ohio factory.

Handcrafted objects are a story we tell about 4% of our possessions.

We think we live in a handcrafted world. We don’t. We think we prefer handcrafted. We mostly don’t.

This raises a question…

How does this apply to handcrafted human writing like articles, essays, videos, and posts that come from individual human minds?

Surely THAT’s different from handcrafted objects…

Human Content Has A Quality Problem Too

The AI slop argument assumes that human content is never slop.

Wrong.

Human content has been getting worse for at least a decade on multiple levels:

Creators learned to optimize for the algorithm, which rewarded engagement over depth. Clickbait, rage-bait, and addictive-but-hollow content were the result.

Ad supply exploded across the open internet. Advertising rates collapsed. News sites that once funded investigative reporting cut budgets, slashed fact-checkers, and replaced depth with volume.

The human content landscape did not just get diluted by amateur entrants. It got actively degraded by the business model that rewarded attention-capture over value.

When people point at LinkedIn and say “look at the AI slop,” they are pointing at a landscape that was already full of human slop. The robotic AI posts are replacing shallow think pieces, not replacing Malcolm Gladwell.

So, using human-created content as the baseline in a direct comparison has two problems. First, most of it is not handcrafted with the care that everyone assumes. Second, much of it is not good. Defending human-created slop against AI slop doesn’t fix anything.

Which leaves one real question:

What is the actual alternative to AI and human slop?

The Real Debate: Handcrafted vs. Hand-Designed

Chef-branded restaurants at scale do not have the chef chopping every vegetable. The chef’s signature is in the system. Their taste, their judgment, their vision shape the experience, even though they didn’t touch most of the food. You accept that their fingerprint is in the design of your meal.

The same pattern is coming to content, and faster than most people think. Here is why.

The top non-fiction creators of the next decade are going to do something that was previously impossible:

Concretize what makes them special (their topic selection, their voice, their signature frameworks, their research process, their point of view) into an AI system.

Augment that special sauce using AI: stress-testing their ideas more ruthlessly than any human editor could, catching their own blind spots across hundreds of drafts, surfacing patterns across sources they would never have had time to read, and verifying factual claims at machine speed.

Scale their output. First 2x. Then 10x. Then 100x and beyond.

The chef’s signature stays in every dish, because the system was designed to carry it. The taste, the judgment, the recognizable voice: all present, all amplified.

This is hand-designed content. Not handcrafted. The distinction is the whole argument. The creator’s fingerprints concentrate in the system rather than in the individual keystrokes, and the reader receives the benefit of their taste at a scale that was never reachable before.

Think of it this way.

Rather than the Internet being filled with nameless AI slop, it could be filled with more work from authors you love. Imagine if you got a Malcolm Gladwell, Michael Lewis, or Yuval Noah Harari book every year rather than every five years.

Why “AI Is Slop” Will Age Terribly

The Slop Fallacy is the move from “current AI content is mostly bad” to “AI content will always be mostly bad.” Critics made this same mistake with digital cameras in 1993, online dating in 2005, and electric cars in 2009. In every case, the assessment of the current state was correct. But the extrapolation from current state to future state was catastrophically wrong.

Tech investor Chris Dixon named this pattern in his 2010 essay The Next Big Thing Will Start Out Looking Like a Toy, building on Clayton Christensen’s The Innovator’s Dilemma. Important new technologies look like toys at launch because they can’t do what mainstream products already do well. Critics miss two things that compound:

The improvement curve is steeper than they can see from where they’re standing.

The technology enables uses the existing products cannot reach.

Below are the four most important reasons among many that AI content will not stay slop:

#1. TRAJECTORY:

Today is the worst AI content will ever be

AI is improving faster than almost any technology in history. The improvement is not linear. It’s exponential. What looks clunky, hallucination-prone, or generic today is a snapshot of the launch state, not the trajectory. Current-state criticism misses where the curve is heading.

#2. PERSONALIZATION:

AI content can be personalized to you specifically

Human content has always been a compromise written for a fictional average reader who isn’t you. The writer aimed somewhere in the middle of their assumed audience, leaving you either patronized or lost.

AI content solves this via personalization on multiple levels:

Expertise. You ask about a new research paper and get an explanation pitched at your existing expertise.

Length. You’re in a rush and want the TLDR.

What You Don’t Know. You ask about a market trend and get the parts you don’t already know, not the introduction you don’t need.

Goal. You’re reading an article to see if it impacts an article you’re writing. AI extracts just what you need.

Medium. You prefer to learn with slides rather than text. AI can convert any article into audio, video, or slides.

The quality upgrade from personalization is bigger than critics register.

#3. NICHE ECONOMICS:

AI makes niche content economic for the first time

Vast demand exists for content that doesn’t exist yet because it is uneconomic for humans to produce:

Hyper-specific podcasts.

Tutorials for niche software.

Explainers of obscure research papers.

Travel guides for unusual cities.

News that no one else is covering.

Human creators cannot economically reach audiences of 20 to 2,000 readers. AI can, because AI content is so cheap to create. Furthermore, AI content won’t compete with human content in the niches. Rather, it will compete against nothing.

What’s also important here is that niche content has built-in demand. People are already searching for it, but there are no relevant results. So when AI content fills the hole, it will rise to the top and be discovered.

#4. RESEARCH DEPTH:

AI can read what humans can’t afford to read

Human journalism has always been throttled by a brutal constraint: a reporter can only cover what they have time to read. Vast troves of publicly available information (SEC filings, regulatory rulings, court records, legislative text) get covered only at the surface, if at all. Bloomberg covers large-cap filings; nobody covers small-cap bankruptcies. The Times covers famous lawsuits; nobody reads the 3.5 million pages of court documents dumped in the Epstein case.

AI collapses the constraint.

One independent AI creator can do more research than an entire human-only newsroom when the research can be done by reading secondary sources.

The critics are comparing AI content to human coverage that was never going to exist in the first place.

Stack these reasons together, and you are no longer comparing AI content to the best human content. You are comparing personalized-AI-content-that-exists to broadcast-human-content-that-wasn’t-made-for-you-and-isn’t-very-good-anymore. And the number of reasons will keep stacking.

The current “no one wants AI content” consensus will age about as well as the “no one will ever meet their spouse online” consensus of 2003.

The Cultural Pattern Behind The Shift From Stigmatized To Successful

Here’s the mental model for how the culture shift actually works, the one that almost no one has.

This framework builds on three established ideas:

Everett Rogers’ Diffusion of Innovations (1962) mapped how new practices spread through populations in a predictable curve from innovators to laggards.

Timur Kuran’s preference cascades, from his 1995 book Private Truths, Public Lies, explained why cultural shifts look sudden: people hide their real views under social pressure until a threshold breaks and everyone admits at once.

The Overton window, a concept developed by policy analyst Joseph Overton in the 1990s and later named for him, described how ideas migrate through zones of acceptability, from “unthinkable” to “radical” to “popular” to “policy.”

The six pillars below are what those dynamics look like, specifically when the resistance is identity-linked stigma: