AI Scaling Law Part 2: Predict The Near-Future Of AI

Earlier this week I posted about Steve Jobs’ top mental model for thinking about the future whereby he:

Identified important, long-term trends

Extrapolated them into the future

And thought through the deep implications

I also shared nuances of how other innovators like Elon Musk, Ray Kurzweil, Sam Altman, and Kevin Kelly follow the same exact model.

The bottom line from the post is two-fold:

The technological future is much more predictable than we think.

If we want to prepare for a future of rapid change, we should follow Jobs’ model. Or as Sam Altman says, “When you are on an exponential curve, make the assumption that it’s going to keep on going.”

Finally, I applied this mental model to AI and shared the most important curve that will help you predict the near future of AI…

The AI Scaling Law

In short, the AI Scaling Law for LLMs (Large Language Models) is that performance increases as a function of three variables:

Compute

Data

Parameters
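To make the idea of "performance as a function of three variables" concrete, here is a minimal sketch in Python. It assumes a power-law form in which loss (lower is better) falls as parameters and training data grow, and it assumes the common rule of thumb that training compute is roughly 6 x parameters x tokens. The function names and every constant are illustrative assumptions, not fitted values from any source mentioned in this post.

```python
def loss(params: float, tokens: float,
         e: float = 1.7, a: float = 400.0, b: float = 400.0,
         alpha: float = 0.34, beta: float = 0.28) -> float:
    """Hypothetical power-law loss. Lower is better.

    e      -- assumed floor the loss never drops below
    a, b   -- assumed scale constants (illustrative only)
    alpha, beta -- assumed exponents (illustrative only)
    """
    return e + a / params**alpha + b / tokens**beta


def training_compute(params: float, tokens: float) -> float:
    """Rule-of-thumb estimate of training FLOPs (an assumption)."""
    return 6.0 * params * tokens


# Scale parameters and data up 10x each and compare the predicted loss.
small = loss(params=1e9, tokens=2e10)
large = loss(params=1e10, tokens=2e11)
print(f"small model loss: {small:.3f}")
print(f"large model loss: {large:.3f}")
print(f"compute ratio: {training_compute(1e10, 2e11) / training_compute(1e9, 2e10):.0f}x")
```

In this toy form, improvement is smooth and predictable as the inputs grow, which is the property the rest of this post leans on.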

Another way to think of The AI Scaling Law is that it’s the modern version of Moore’s Law, except it’s much steeper. According to both Elon Musk and Sam Altman independently, the capability of AI models is currently increasing at a rate of 5-10x per year—faster than any other technology in history.

You only have to extrapolate this trend a few years to see how big of a deal this is:

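If this trend continues at the top of that range (10x per year), AI models in 2029 could be a whopping 100,000 times more capable than they are now. Even at the bottom of the range (5x per year), the gain would be roughly 3,000 times.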
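The arithmetic behind that extrapolation is simple compounding. The sketch below assumes a five-year window (2024 to 2029) and applies both ends of the 5-10x range quoted above. The function name is just for illustration.

```python
def compounded_gain(rate_per_year: int, years: int) -> int:
    """Total multiplier after compounding a yearly rate for `years` years."""
    return rate_per_year ** years


YEARS = 5  # assumption: 2024 through 2029

low = compounded_gain(5, YEARS)    # bottom of the quoted range
high = compounded_gain(10, YEARS)  # top of the quoted range

print(f"5x per year for {YEARS} years:  {low:,}x")
print(f"10x per year for {YEARS} years: {high:,}x")
```

The spread between the two results shows how sensitive a multi-year projection is to the assumed yearly rate.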

What separates today’s AI pioneers is that they understood and bet billions on these scaling laws before everyone else. And they ended up being right.

Dario Amodei, a former senior executive at OpenAI and co-founder of Anthropic (creator of Claude), makes this point in an interview at a recent Fortune conference:

Source: Fortune Conference

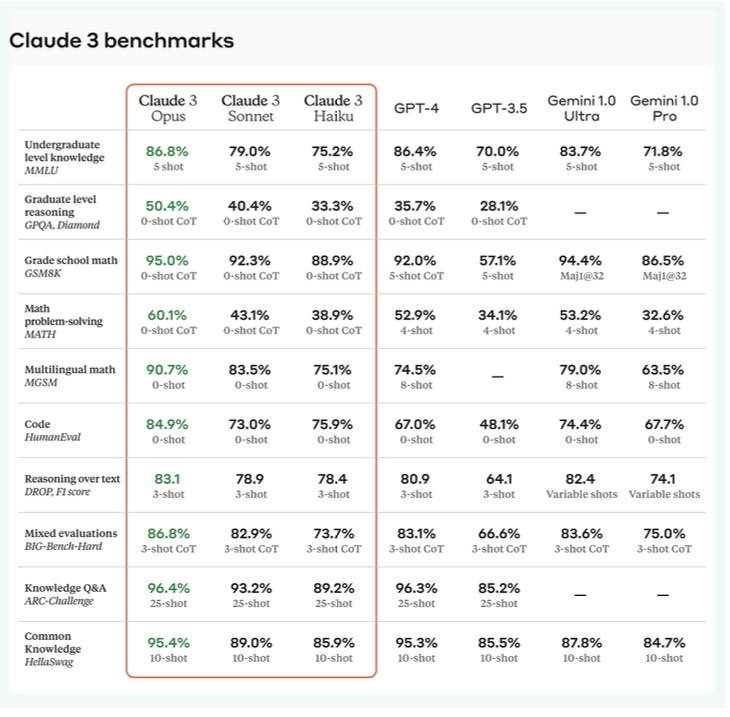

Interestingly, just this last week, Anthropic released Claude 3, whose top model outperforms OpenAI’s GPT-4 and Google’s Gemini on most of the benchmarks Anthropic published.

Now, the top tech companies in the world are spending hundreds of billions of dollars to advance the state of the art, confident that it will pay off because of the AI Scaling Law.

I share all of this with you because this AI Scaling Law is what convinced me to spend more and more of my time on AI proactively rather than waiting for it to inevitably force me to change.

Which brings me to my next point…

More Proof That The Near-Future Of AI Capability Might Be More Predictable Than We Think

One of the weird things about scaling LLMs is that they seem to develop emergent capabilities out of nowhere. This has made predicting the future of AI capability much harder.

Now, new research shows that this “surprise emergence” might be more of an artifact of how results are measured than actual reality. In other words, the future capabilities of AI might already be in the data today.
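Here is a toy illustration of how the choice of metric alone could produce an apparent jump. It assumes, hypothetically, that a model's per-token accuracy improves smoothly with scale, and that the benchmark only gives credit when every token of a 10-token answer is correct. Both the numbers and the function name are assumptions for illustration, not the setup used in the research.

```python
def exact_match_rate(per_token_accuracy: float, answer_length: int) -> float:
    """Chance that all tokens are right, assuming independent tokens."""
    return per_token_accuracy ** answer_length


ANSWER_LENGTH = 10  # assumed length of a full answer, in tokens

# Per-token accuracy rises steadily, but the all-or-nothing score
# stays near zero for a long time and then climbs steeply.
for accuracy in (0.50, 0.60, 0.70, 0.80, 0.90, 0.95):
    score = exact_match_rate(accuracy, ANSWER_LENGTH)
    print(f"per-token {accuracy:.2f} -> exact match {score:.3f}")
```

Measured per token, progress looks gradual. Measured as all-or-nothing, the same progress looks like a capability appearing out of nowhere.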

I found the topic fascinating enough to watch an hour-long academic presentation of the paper that explains this phenomenon. Then I created a 10-minute supercut of the video so you can get the main ideas with most of the technical detail left out:

Source: Brando Miranda (Stanford)

Another Fascinating Trend In AI Capability To Watch

On March 5, the Maximum Truth newsletter released a fascinating chart about the IQ scores of various AI models…

What really made the chart land is the following tweet from AISafetyMemes:

For the first time in history, an AI has a higher IQ than the average human.

… along with the extra context from the Maximum Truth newsletter:

“Look at the consistent progression:

1) Claude-1 was hardly better than random. It got 6 answers right, giving it ~64 IQ.

2) Claude-2 scored 6 additional points per test (worth ~18 IQ points).

3) Claude-3 scored yet another 6.5 points, worth ~19 more IQ points, bringing it up to above the human average.

The symmetric increases make me wonder if Anthropic is releasing versions based on internal benchmarks that happen to closely correlate with this IQ measure.

Let’s now consider the release dates on the versions:

1) Claude-1 March 2023

2) Claude-2 July 2023 (4 months production time)

3) Claude-3 March 2024 (8 months production time)

A very simple extrapolation suggests that we should therefore expect to get Claude-4 in 12 - 16 months [March-July 2025], and that it should get about 25 questions right per test, for an IQ score of 120.

After that, in another 16 - 32 months [July 2026 - November 2027], Claude-5 should get about 31 questions right, for roughly 140 IQ points. After that, in another 20 - 64 months after that [March 2028-November 2031], Claude-6 should get all the questions right, and be smarter than just about everyone. That’s 4 - 10 years out in total, adding up all the time periods. Of course, that progress is not a given. Anthropic could run up against budget constraints, energy constraints, regulatory constraints, etc. Then again, the relentless progress of Moore’s Law suggests that the patterns do have a decent chance of holding.”
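The timeline in the quote can be reproduced with a few lines of date arithmetic. This sketch rests on my reading of the ranges, which is an assumption: the lower bounds (12, 16, 20 months) continue the pattern of gaps growing by 4 months, and the upper bounds (16, 32, 64 months) continue the pattern of gaps doubling. The helper names are hypothetical.

```python
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the first day of the month `months` after `start`."""
    total = start.year * 12 + (start.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


def release_dates(start: date, gaps: list[int]) -> list[date]:
    """Apply each gap (in months) in turn, starting from `start`."""
    dates = []
    current = start
    for gap in gaps:
        current = add_months(current, gap)
        dates.append(current)
    return dates


CLAUDE_3 = date(2024, 3, 1)
LINEAR_GAPS = [12, 16, 20]    # assumed: each gap grows by 4 months
DOUBLING_GAPS = [16, 32, 64]  # assumed: each gap doubles

early = release_dates(CLAUDE_3, LINEAR_GAPS)
late = release_dates(CLAUDE_3, DOUBLING_GAPS)

for name, a, b in zip(("Claude-4", "Claude-5", "Claude-6"), early, late):
    print(f"{name}: {a:%B %Y} to {b:%B %Y}")

print(f"total: {sum(LINEAR_GAPS) / 12:.1f} to {sum(DOUBLING_GAPS) / 12:.1f} years")
```

Under these assumptions, the early dates match the lower bounds in the quote (March 2025, July 2026, March 2028), and the totals of about 4 and 9.3 years line up with the quoted "4 - 10 years." The doubling scenario lands later than the bracketed upper dates in the quote, so the newsletter may have used a somewhat different assumption for those.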

What It All Means

We are in the quiet before the storm.

Technological change is going to keep moving faster and faster.

This change will impact almost every part of our work, culture, government, and lives. It will bring incredible opportunities and risks along with it.

Yes, there is no guarantee that the AI Scaling Law will continue. It might suddenly plateau. But so far, no one in the field has found a reason why it won’t continue.

Bottom line…

There’s now enough data to say that the most pragmatic course of action is to assume that it will continue for at least the next few years and plan accordingly.

Hey Michael!

Thank you for your effort in researching and teaching about AI and its connections to our common and routine lives.

I can see the power of AI, and as we used to discuss for long hours, we understand this is turning and will keep turning our lives upside down (in the good sense).

I've started my own research on AI and the manufacturing world (incredible things to come) and am about to start a new newsletter for manufacturing and operations managers (it's incredible what you can achieve using all of the new AI models) and your approach and teaching have helped me along the way!

So, thank you!

Dennis Serrano