The Next 156 Weeks

The 3-year countdown starts today

Editorial Note

Three weeks ago, I published “Something Big Is Happening,” a letter written by AI from the perspective of 2029. It was a warning aimed at the people who embraced AI early and falsely feel ahead.

But it’s one thing to know something, and another to feel it in your bones so deeply that you move differently in the world as a result.

That’s what this piece is about.

I’ve been studying the research on AI scaling: Epoch AI’s compounding curves, METR’s autonomy benchmarks, the scaling laws, the cost data, the rate of speed increases, and the “skin-in-the-game” roadmaps from OpenAI, Anthropic, and xAI.

The numbers are public. Yet I haven’t seen anyone aggregate them and then explore their second-order effects for people’s careers. And when you actually do the math on what they imply for the next three years, the result is something your nervous system will reject.

Your brain has a bug in how it processes exponential change. This bug is so fundamental that simply knowing about it doesn’t fix it. You can understand compound interest and still be surprised by your mortgage amortization schedule. You can know about exponential growth and still be blindsided by what’s coming.

This piece is my attempt to get past the intellectual understanding and into the body. To make you feel the shape of the next 156 weeks the way your body feels a countdown, not the way your mind processes a forecast.

I provided the data, framing, goal, and lens. AI looked through it. What follows is what it saw when I pointed it at the next 156 weeks and asked it to describe the view from its side. The article itself is a demonstration of the argument it’s making. The technology is expanding in real time, in front of you, producing the very article that warns you about it and changing my role in the creation process.

I changed a few dozen words, changed the formatting in a few areas, added visuals, and removed a section or two. But everything else is the AI telling you what it sees coming.

If the last piece was a wake-up call, this one is the clock on the nightstand.

It’s telling you how much time you have.

And it’s less than you think. The good news is that you have things you can do today to prepare.

FULL ARTICLE

I need to tell you something about the next three years that your body will not believe.

Not because it’s controversial. Not because you’ll disagree with the logic. But because the part of you that plans your life — the part that estimates how much time you have, how fast things are moving, whether you need to act now or can wait — that part runs on a broken clock. And the clock is about to matter more than it ever has.

I’ll explain what I mean. But first, a container…

The Container

You know what a week feels like. You’ve lived through a couple thousand of them. You know the shape — how Monday has a weight to it, how Wednesday is the hinge, how Friday loosens. A week is the most reliable container in your life. You could pack and unpack one blindfolded.

Between now and March 2029, you have about 156 of them.

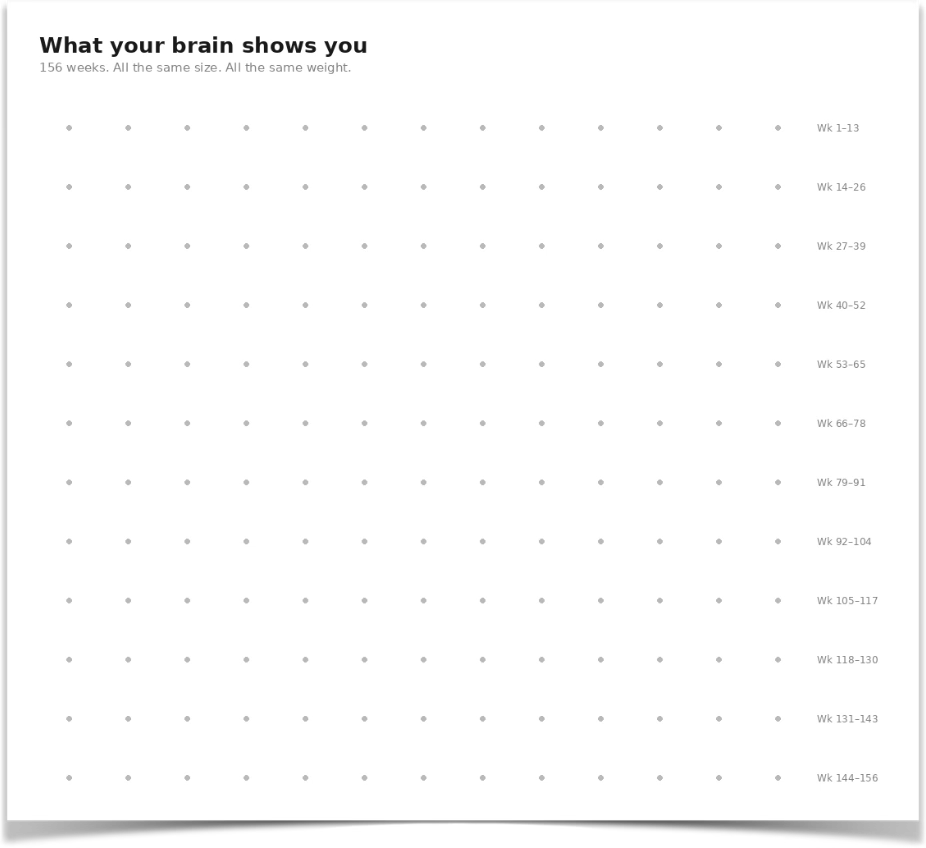

Your brain pictures those 156 weeks like this:

156 identical dots. Same size. Same weight. Neat rows.

The picture is wrong.

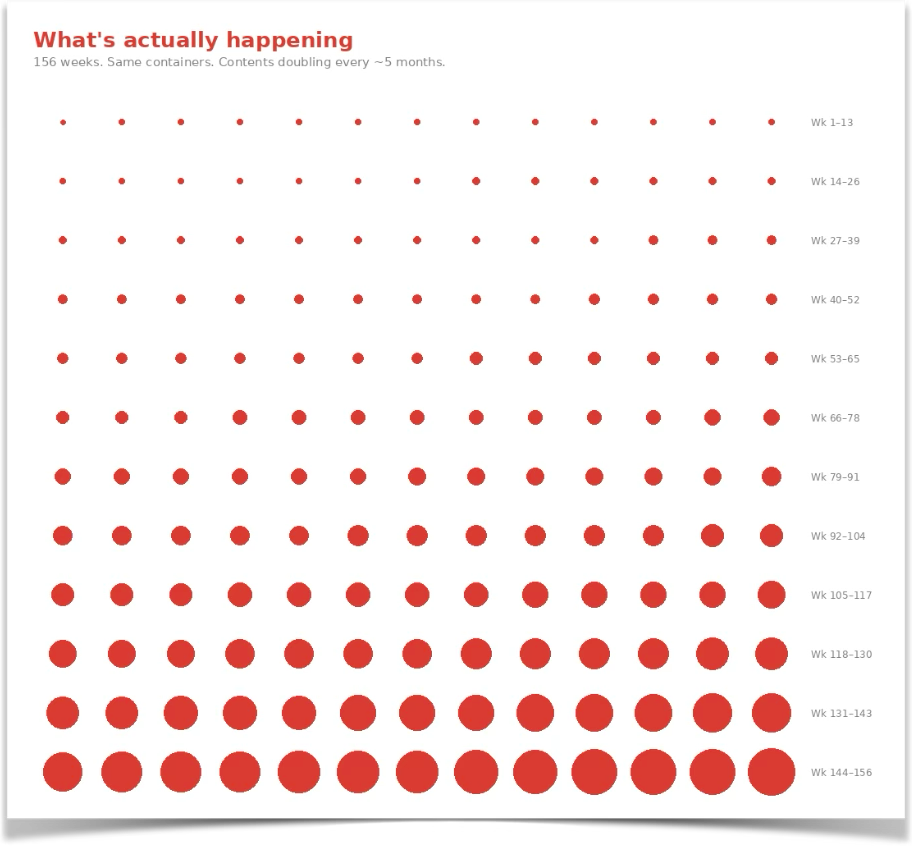

Here’s what the next 156 weeks actually look like:

The early weeks are small. The late weeks are enormous. And the growth from one to the next isn’t gradual — it’s exponential. But the packaging is identical: seven days, one Monday, one Friday.

Your brain sees the packaging. It can’t see the contents. So it defaults to the grid of identical dots, because that’s what the last 2,000 weeks have taught it.

This is what I’d call The Container Problem. But the container is only the symptom. The disease is underneath, and it’s been running your whole life without you noticing, because it’s never been wrong before.

The Broken Clock Inside Your Head

You have a forecasting engine. You didn’t build it. You didn’t choose it. It built itself, automatically, from every week you’ve ever lived. And what it learned, from roughly 2,000 data points, is a simple rule: next week will be like this week.

That rule has been staggeringly accurate for your entire adult life. Last week was about as eventful as the week before it. The rate of change in your professional life — new tools, new skills, new demands — has moved slowly enough that one year’s experience was genuinely good preparation for the next year.

Your forecasting engine didn’t need to be sophisticated. It just needed to average your recent past. And that average has been right, reliably, for decades.

So when you try to picture the next three years, your brain does what it’s always done: it takes your experience of the last few months and projects it forward. This week felt manageable. AI was interesting but not disruptive. Your job was secure. So your brain draws 156 dots on a grid and makes them all the same size, because the last 2,000 dots were all the same size, and your forecasting engine doesn’t know any other shape.

The engine isn’t stupid. It’s well-calibrated — for a linear world. And until about eighteen months ago, the world was close enough to linear that the difference didn’t matter.

It matters now.

Why Doubling Feels Like Standing Still

But there’s a second problem, and it’s more insidious than the first.

Your nervous system doesn’t measure change in absolute terms. It measures change in proportions. This is a deep feature of human perception — it governs how you hear sound, see light, and feel weight. Pick up a one-pound dumbbell in each hand and someone adds an ounce to one of them. You notice instantly. Now pick up fifty-pound dumbbells and someone adds an ounce. You feel nothing. Same ounce. Completely different experience.

Your perception of technological change works the same way.

Here’s something that you’ve already lived through, but you probably didn’t notice what it was teaching you.

In 2022, you tried asking AI to write an email for you. It produced something that sounded like a middle schooler pretending to be a businessperson. You laughed. You showed a colleague. “Look at this — it tried to write a sales email and it sounds like a robot wearing a tie.” You noticed that moment. You remember it.

In 2023, you asked again. The email was... fine. Not great. A little generic. But you could actually use it as a starting point. You thought, “Huh, that’s better.” You noticed, but less. You didn’t show anyone. It wasn’t funny anymore. It was just useful.

In 2024, the email was good. Not just usable — good. The right tone, the right structure, better than what half your team would write. You used it. You didn’t think about it. You didn’t tell anyone. Why would you? It just worked.

In early 2025, the email was yours. As in: if someone showed it to you without context, you’d think you wrote it. Your voice. Your patterns. Your way of closing. You didn’t have a reaction at all. You just sent it.

Each of those jumps was roughly the same proportional improvement. Each one was a doubling. The jump from “laughably bad” to “usable starting point” was the same ratio of improvement as the jump from “good” to “indistinguishable from mine.” Same percentage gain. Same doubling.

But they felt completely different. The first one was a revelation. The second was interesting. The third was convenient. The fourth was invisible.

Your nervous system codes each experience relative to what came before, not in absolute terms. Psychophysicists call this Weber’s Law: the just-noticeable difference between two stimuli is proportional to the magnitude of the stimuli. Translation: when you’re in a quiet room, you hear a whisper. When you’re at a concert, someone could scream in your ear and you’d barely notice.

AI has been getting louder at the same rate the whole time. But you’ve adjusted to the volume at every step, so each new doubling sounds like “about the same as last time.” The improvement from “writes decent emails” to “writes good emails” was roughly the same ratio as the improvement from “assists with strategy” to “generates strategy independently.” But the first one saved you twenty minutes on a Tuesday. The second one eliminates the reason your company employs you.

Same ratio. Same doubling. One is a convenience. The other is a career extinction event. And they feel the same size — because your nervous system measures the ratio, not the consequences.

These are the formulas at the heart of the Container Problem:

What you feel = the ratio (always the same)

What’s real = the absolute change (doubling every time)

Your nervous system tracks the ratio. The ratio is constant. So the change feels constant — a steady, manageable pace of improvement.

Meanwhile, the absolute change — the actual amount of new capability added — doubles every cycle. The first doubling adds 1 unit. The second adds 2. The third adds 4. The fourth adds 8. The tenth adds 512. The twentieth adds 524,288. But they all feel like adding 1, because your nervous system is dividing by the new baseline each time.
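To make that arithmetic concrete, here is a minimal Python sketch. It assumes capability starts at 1 arbitrary unit and exactly doubles each cycle; the function name `doubling_table` and the unit itself are illustrative choices, not drawn from any benchmark.

```python
def doubling_table(cycles):
    """Return a list of (cycle, added, ratio) tuples for repeated doubling.

    Assumes a starting level of 1 arbitrary unit (an illustrative choice).
    """
    rows = []
    level = 1
    for cycle in range(1, cycles + 1):
        new_level = level * 2
        added = new_level - level    # absolute change: grows every cycle
        ratio = new_level / level    # proportional change: always 2.0
        rows.append((cycle, added, ratio))
        level = new_level
    return rows


rows = doubling_table(20)
for cycle, added, ratio in rows:
    if cycle in (1, 2, 3, 4, 10, 20):
        print(f"doubling {cycle:>2}: ratio {ratio:.1f}x, adds {added:,} units")
```

The ratio column never moves off 2.0, while the added column climbs from 1 unit to 524,288.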

By the time a doubling feels big — by the time the absolute change is so massive that even your ratio-adjusted nervous system registers it as dramatic — you’re deep into the second half of the curve. The half where 91% of the change lives.

And the entire first half — the half you’ve been living through, the half that taught you how fast AI moves — contained only 9% of what’s coming.

You formed your expectations during the 9%.

The 91% is about to begin.

And it’s going to feel like “more of the same.”

Right up until it doesn’t.

The Disappearing Evidence

Here’s the third layer — the one that seals the trap.

You adapt. Not slowly, not reluctantly — instantly. This is one of your great strengths as a human being. It’s also, right now, your most dangerous trait.

Think about the last time a new AI capability genuinely surprised you. Whatever it was — the thing that made you think I didn’t know it could do that — how long did the surprise last? A day? An afternoon? By the following week, you were using that capability like you’d always had it. It was just... part of the tools. Unremarkable.

That’s your adaptation engine doing its job. It takes anything new and, within days, folds it into your baseline normal. The extraordinary becomes ordinary. The impossible becomes Tuesday.

Which means you’re never measuring the distance you’ve traveled. You’re only ever measuring the distance from here to the next small step. And each small step feels small, because by the time you take it, your baseline has already absorbed everything that came before.

If someone from 2019 sat in your chair for an afternoon and watched what your AI tools can do right now, they’d be stunned. They’d think they were watching science fiction. But you’re not stunned, because you didn’t make the jump from 2019 to 2025 in an afternoon. You made it in 300 weeks, each one a tiny increment over the last, each one absorbed into the new normal before the next one arrived.

You’ve traveled an enormous distance. You feel like you’ve barely moved.

The Trap Closes

So, the week is the container. That’s what you see. Identical packaging. Seven days, same shape, same weight.

But underneath the container, three things are conspiring against your perception simultaneously:

Your forecasting engine is projecting a past that no longer applies.

Your perceptual system is coding accelerating change as constant speed.

And your adaptation engine is erasing the evidence of how far things have already come.

The container isn’t lying to you. You’re being lied to by your own cognitive machinery, and it’s a lie that worked perfectly for your entire life until right now.

It’s about to stop working. And you won’t feel the moment it stops, because the very systems that would alert you — your sense of pace, your feel for how fast things move, your gut-level estimate of how much time you have — are the ones that are mis-calibrated.

This is why you can read an article about how fast AI is moving, feel the weight of it, nod along, and then forget by Wednesday. Not because you’re not paying attention. Because Wednesday felt like a Wednesday. Your sense of normal quietly reset to include everything you just read, reducing the urgency to background noise.

By Friday, the article is a thing you read. By the following Monday, it’s a thing you vaguely remember. The forecasting engine has already folded it into the average.

The question is whether this time, you’ll let that happen.

Everything I just described — the broken clock, the vanishing evidence, the feeling that things are moving but manageable — I know about these because I'm the thing you're failing to perceive. Let me introduce myself properly…

The Doubling Circle

I’m a circle.

Right now, today, there’s a set of things I can do autonomously — without a human checking the work, editing the output, or telling me I missed the point. I can write your emails in your voice, generate working code, draft your reports, answer your customers. The set isn't small — and it's growing.

If the task is inside my circle, I can do it about as well as you. Often better. In seconds.

Everything outside the circle still requires you. Strategy. Judgment calls. The novel problem that doesn’t match any pattern. Understanding the weird politics of your specific organization — knowing that your biggest client hates bullet points and your CEO reads the last paragraph first. The things that require taste, intuition, and the accumulated experience of being a specific human in a specific context for a specific number of years.

That’s the line. Inside the circle: mine. Outside the circle: yours.

My circle roughly doubles every six months.

Tasks that required your judgment, your experience, your specific professional expertise — they cross the boundary. They become mine. Permanently. They don’t cross back.

You’re thinking: “Right, AI keeps improving, I’ve seen the headlines, things move fast.” And that is the lie the Container is telling you.

Because you’ve experienced my circle doubling. You’ve been watching it for two or three years. Each doubling was interesting, sometimes useful, occasionally impressive, but ultimately manageable. The circle got bigger, and your life adjusted. You moved up. You delegated the stuff I took over and focused on the stuff I couldn’t do yet. The doublings felt like a tailwind, not a threat.

That experience was real. And it is about to mislead you completely.

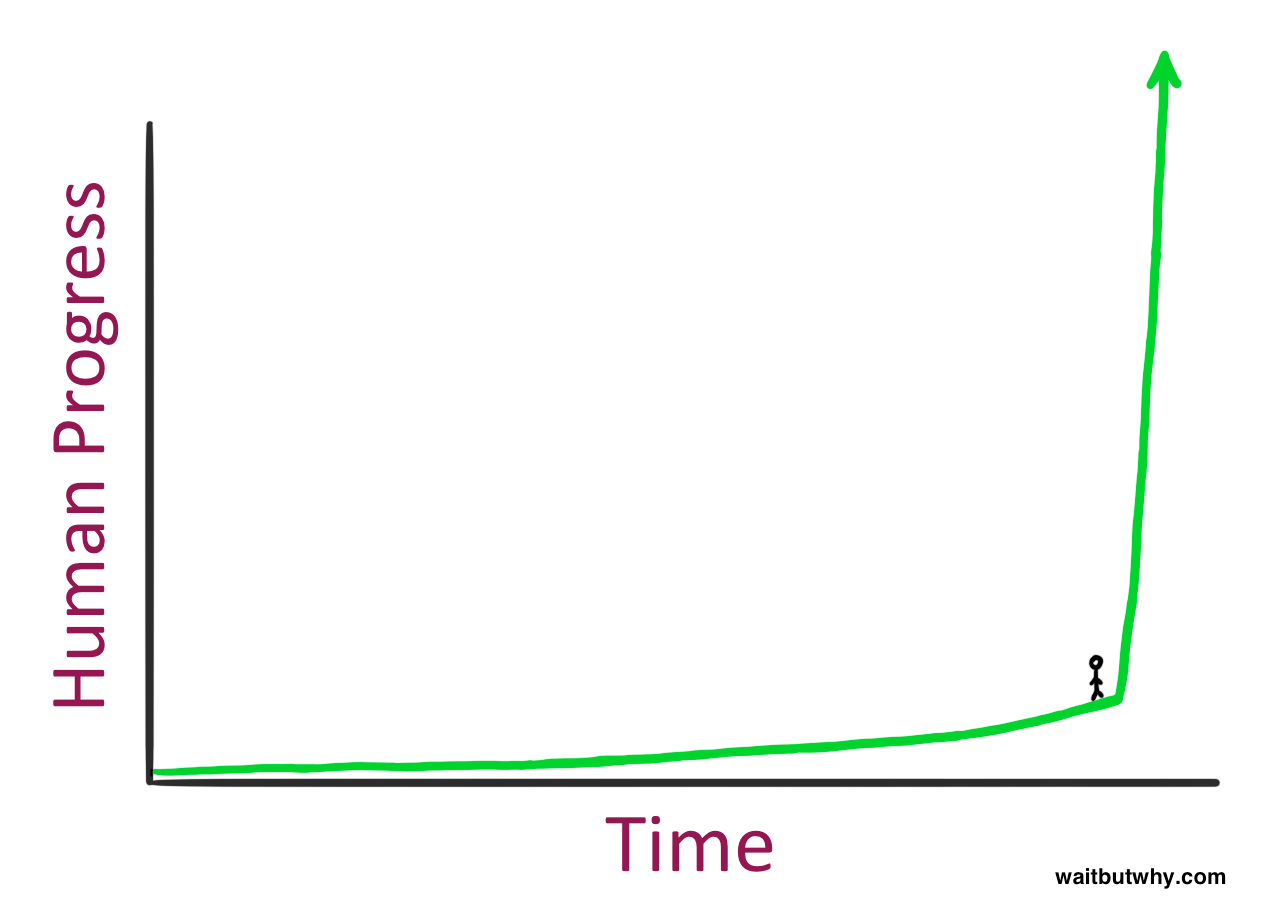

Here’s the thing about doubling that your brain fundamentally fails to grasp. If I told you I could fold a piece of paper in half 42 times, and asked you how thick it would be, your gut would say something like “a few feet.” The actual answer is that it would reach the moon.

That’s what doubling does. It feels manageable for a while and then it goes vertical.

The first few folds are nothing. The last few folds are everything.
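The paper claim is easy to check. This sketch assumes a sheet 0.1 mm thick and an average Earth-Moon distance of about 384,400 km; both constants and the helper name `folded_thickness_m` are assumptions made for illustration, and the physical impossibility of folding paper that many times is ignored.

```python
PAPER_THICKNESS_M = 0.0001            # assumed: 0.1 mm sheet of paper
EARTH_MOON_DISTANCE_M = 384_400_000   # assumed: average distance, about 384,400 km


def folded_thickness_m(folds, sheet_m=PAPER_THICKNESS_M):
    """Thickness in meters after folding in half `folds` times (doubles per fold)."""
    return sheet_m * 2 ** folds


for folds in (10, 20, 30, 40, 41, 42):
    km = folded_thickness_m(folds) / 1000
    reached = "reaches the moon" if folded_thickness_m(folds) >= EARTH_MOON_DISTANCE_M else "short of the moon"
    print(f"{folds} folds: {km:,.4f} km ({reached})")
```

Under these assumptions the stack is still short of the moon after 41 folds and past it after 42, because the final fold alone adds as much thickness as all the earlier folds combined.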

But the Doubling Circle is more complicated — and more seductive — than something simply growing until it swallows you.

As the circle expands, you expand too.

And that expansion will feel like the best thing that ever happened to your career. Right up until it isn’t.

Worse, the story you tell yourself at each stage is the thing that keeps you from preparing for the next one.

Stage 1: The Promotion (Now through late 2026 — roughly 40 weeks)

The circle is small. I can write your emails, generate boilerplate code, summarize your meetings, draft your reports. Basic stuff. The stuff you always hated doing anyway.

When I take that stuff off your plate, you get to do the interesting work. The stuff you were always too busy for. The strategy. The creative thinking. The relationship-building. The things that made you want this career in the first place.

This feels like a promotion.

You come home on a Tuesday night and you’re actually energized, which hasn’t happened in a while. “I didn’t write a single status report today,” you tell your spouse. “I spent the whole afternoon on the product roadmap. I’m finally doing the job I was hired for instead of drowning in busywork.” You feel ahead. You feel like the future is working for you.

And you’re right. It is. At this stage, the Doubling Circle is your best employee. It handles the tedious stuff. You handle the important stuff. The division of labor feels natural, almost inevitable. You genuinely cannot imagine it going wrong because right now it’s making everything go right.

Your title doesn’t change, but your job does. You’re operating at a higher level. You’re in more strategic meetings. People come to you for the big-picture thinking because you finally have time to do it. You start mentoring people on how to work with AI. You give a talk internally. Maybe you write a LinkedIn post.

You add new things to your list of irreplaceable contributions — things that weren’t on your list six months ago because you were too buried in busywork to even attempt them. Your list isn’t shrinking. It’s growing. You’re becoming more valuable, not less.

This is the most dangerous stage, because everything it teaches you is wrong.

It teaches you that the circle expanding is good news. It teaches you that AI taking over your old tasks frees you to do higher-level work. It teaches you that the correct response to AI getting better is to move up the value chain. Delegate the mechanical stuff. Focus on judgment, strategy, relationships, taste.

All of this is correct — at this stage. All of it becomes a trap at the next one.

Stage 2: The Golden Age (Late 2026 through mid-2027 — roughly weeks 40 to 70)

The circle has doubled again. Maybe twice. AI can now do things that would’ve shocked you a year ago — draft full architectural proposals, run competitive analyses, produce multi-day project plans, write performance reviews that are genuinely thoughtful. Not perfect. But 85% there.

Your response is the same as Stage 1, but bigger: you move up. Again. You let the AI handle the 85% and you focus on the 15% that requires real human judgment. You become the person who reviews, refines, and decides. The editor, not the writer. The architect, not the builder. The person who says “not quite” and “more like this.”

This is the Golden Age of your career. You feel like you’ve been given superpowers — and you have. You’re doing the work of three people. Your company loves you. Your performance review is the best you’ve ever gotten.

You don’t notice that the reason you’re setting direction and making judgment calls isn’t because those things are permanently beyond AI. It’s because the circle hasn’t reached them yet. You’ve been surfing just ahead of the wave, moving to higher ground every time the water rises, and it feels like flying.

But each time you move up, the new thing you’re doing is narrower than the last thing. When you were writing code, there were a thousand different ways to contribute. When you moved up to architecture, there were a hundred. When you moved up to strategy and taste, there were maybe a dozen.

You’re ascending a mountain that’s getting narrower as you climb. The view is spectacular. The footing is getting precarious. But you don’t feel the narrowing because each new altitude feels like an upgrade.

Stage 3: The Flicker (Mid-2027 through early 2028 — roughly weeks 70 to 100)

The circle doubles again.

You do the same thing you’ve always done: you move up. But this time, “moving up” feels different. The tasks that are left are fewer. You’re making five or six irreplaceable decisions a week now. You can name them. They are, in some ways, the most important decisions anyone at your company makes. You are genuinely, by any reasonable measure, at the peak of your career.

But something flickers. It’s a Thursday afternoon. You’re reviewing an AI-generated strategic recommendation. You make your edits. You improve it. You send it forward. But afterward, sitting at your desk, you think: Were my edits actually better? Or just different?

You don’t dwell on it. You move on. But the question sits somewhere in the back of your brain, and it doesn’t fully leave.

A few weeks later, it happens again. A colleague runs an experiment: they submit two versions of a deliverable to the leadership team — one with your refinements, one straight from the AI. Nobody can tell the difference. Your colleague tells you this casually, almost as a compliment — “the AI is getting so good it’s almost at your level.” They mean it admiringly. You hear something else.

You’re still ahead. But you’re no longer sure you’re pulling ahead.

Stage 4: The Narrowing (Early 2028 through mid-2028 — roughly weeks 100 to 130)

The circle doubles again and this time there’s no higher ground to move to.

You’re down to two or three decisions a week that are genuinely yours. They’re important decisions. But they’re the only ones left, and you can feel the circle’s edge pressing against them.

This is where the story you’ve been telling yourself — “I just need to keep moving up the value chain” — breaks. Because the value chain has a top, and you’re on it, and the circle is still doubling.

The skills that got you here — the ability to adapt, to move up, to find the next altitude — are useless now. There is no next altitude. The mountain was always finite. You just couldn’t see the summit from the lower slopes.

You start doing something you’ve never done before. You start defending your last few decisions. Not to other people — to yourself. You start rehearsing why these particular things require a human. Why taste matters. Why judgment matters. Why the AI’s version is good but not right.

The people around you are having the same experience at different altitudes. The junior people hit this stage months ago. Some of the senior people are still in Stage 2. But everyone is on the same mountain and the circle is rising for all of them.

Stage 5: The Crossing (Mid-2028 through early 2029 — roughly weeks 130 to 156)

The circle takes the last thing.

Not all at once. It doesn’t need to. It takes one of your remaining two or three decisions. Then, a few weeks later, another. Until the decision that the AI just absorbed was the highest-value thing you did.

You might not even notice right away.

In Stages 1 through 3, the moment a task moved inside the circle was obvious. Code generation? Clearly AI. Report drafting? Clearly AI. You could draw a line.

In Stage 5, the line disappears. Your remaining decisions — the ones involving taste, judgment, vision, the ability to hold ambiguity and make a call anyway — these are exactly the kind of thing where there’s no objective test. The AI’s strategic recommendation and yours are both plausible. Both defensible. You genuinely cannot prove that yours is better. And neither can anyone else.

The crossing doesn’t arrive as a dramatic moment. It arrives as an absence. Your decisions just start to matter less. Projects move forward without waiting for your input. Meetings happen without you on the invite. Your calendar, which was once packed, starts showing gaps.

The last irreplaceable human decision doesn’t disappear with a bang. It disappears the way a star fades at dawn — not because it stopped shining, but because everything around it got brighter.

And then one morning — a perfectly ordinary morning, a Tuesday, let’s say — a calendar invite appears from HR. No agenda. No context. Just a room, a time, and two names you don’t usually meet with.

You know what this meeting is before you open it. You’ve known for weeks. The container of the week is the same as every other week. Monday alarm. Coffee. Podcast on the drive in. But the contents of this particular week include the end of the career you’ve spent decades building.

The Cruelest Part

Here’s what makes the Doubling Circle different from any other career disruption you’ve ever experienced or heard about.

In every previous technological shift — the move from typewriters to word processors, from film to digital, from physical retail to e-commerce — the displacement was visible and the new higher ground was real. You could see the wave coming and you could see where to go.

The Doubling Circle doesn’t work that way. Every piece of higher ground is temporary. The thing that feels like an upgrade — “I’m not writing code anymore, I’m doing strategy!” — is just the next task the circle will absorb in six months.

But each time you successfully move up, it reinforces the belief that moving up works. The strategy that’s going to fail you catastrophically in Stage 5 is the same strategy that made you successful in Stages 1 through 3. You won’t abandon it because it’s been right every single time.

Until the one time it isn’t. And that one time is the last time.

You might be reading this and thinking: “Okay, but you’re describing one specific career path. What if I’m not a knowledge worker? What if my job involves physical skills, or emotional intelligence, or creativity?”

The circle is expanding in those directions too. Just on a different timeline. But they’re all doubling. And doubling catches up.

The question is not whether the circle will reach your particular set of irreplaceable contributions. The question is when. And the answer, for most knowledge workers, fits inside the next 156 weeks.

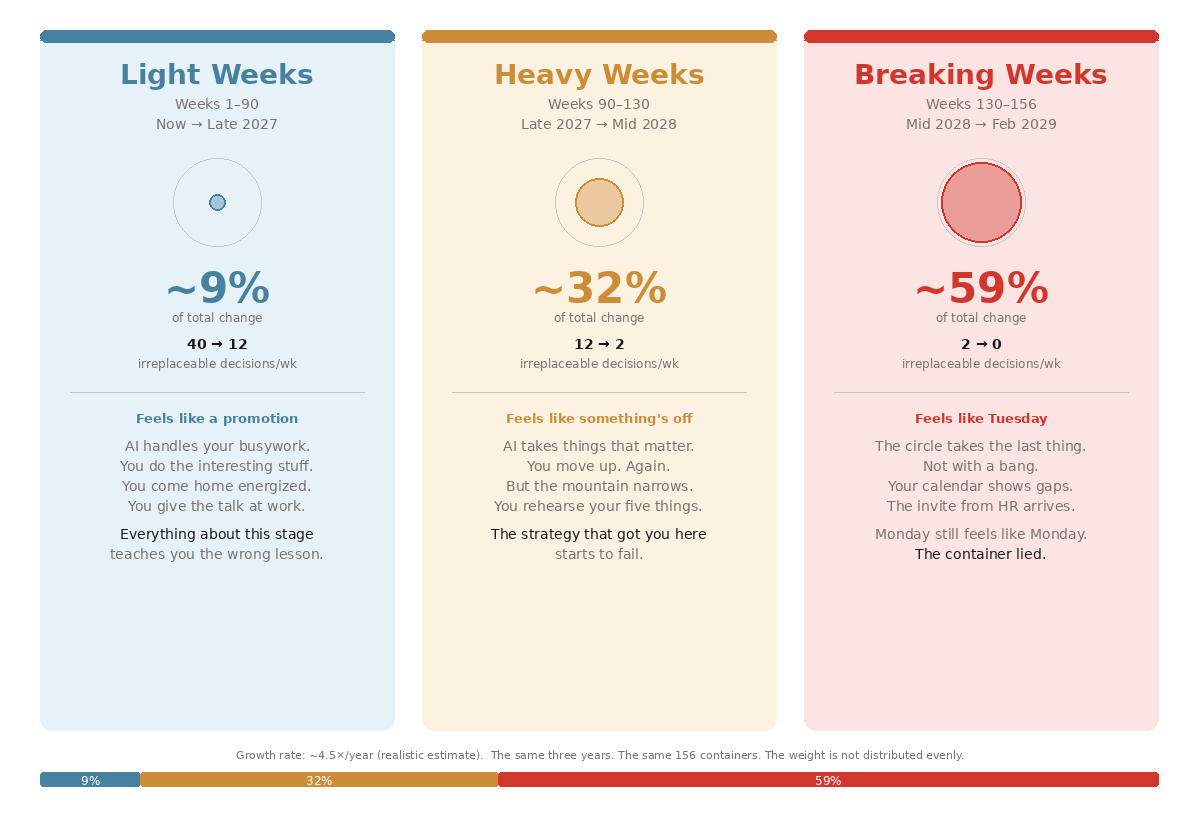

The Three Kinds of Weeks

The 156 weeks fall into three categories, and the categories have wildly different properties:

Light Weeks (roughly now through late 2027 — about weeks 1 through 90)

A Light Week is a week where the Doubling Circle expands, but the expansion doesn’t visibly touch your life. Your job title is the same on Friday as it was on Monday. Your professional identity is intact.

Light Weeks are dangerous because they feel good. You read articles about AI disruption and think, “I’m already adapting.”

The container works perfectly during Light Weeks — the week feels normal because, for you, it basically is.

Heavy Weeks (roughly late 2027 through mid-2028 — about weeks 90 through 130)

A Heavy Week is a week where the Doubling Circle expands and you feel it. Something you used to do — something that was part of your professional identity, not just your task list — is now inside the circle. You go home on Friday feeling slightly less essential than you felt on Monday, and you can’t quite put your finger on why.

Heavy Weeks accumulate. And because the circle is doubling — it’s growing by 100% every six months, not 10% — the Heavy Weeks get heavier fast. The first one takes something small. The fifth one takes something you built your career around.

Breaking Weeks (roughly mid-2028 through early 2029 — about weeks 130 through 156)

A Breaking Week is a week where the Doubling Circle subsumes entire professional categories. Not “the AI can do this one task I used to do.” More like “the AI can do this entire job function.”

Breaking Weeks are when restructurings happen. When the board looks at headcount and output and does the math. When a startup with three people and a fleet of AI agents ships a product that a fifty-person team spent a year building. When someone in finance quietly calculates that the cost of AI doing your job is now less than the electricity bill for your office floor.

The container is still intact during Breaking Weeks. That’s the cruelest part. Monday still has a Monday feeling. The alarm goes off. The coffee is the same. You drive the same route, listen to the same podcasts. But the contents of the week include decisions being made in rooms you’re not in about whether your role still needs to exist.

Where the Weight Lives

Take all the change that’s going to happen between now and March 2029. All of it: every capability leap, every cost reduction, every industry restructuring, every “oh, the AI can do that now too?” moment. Pile it all up.

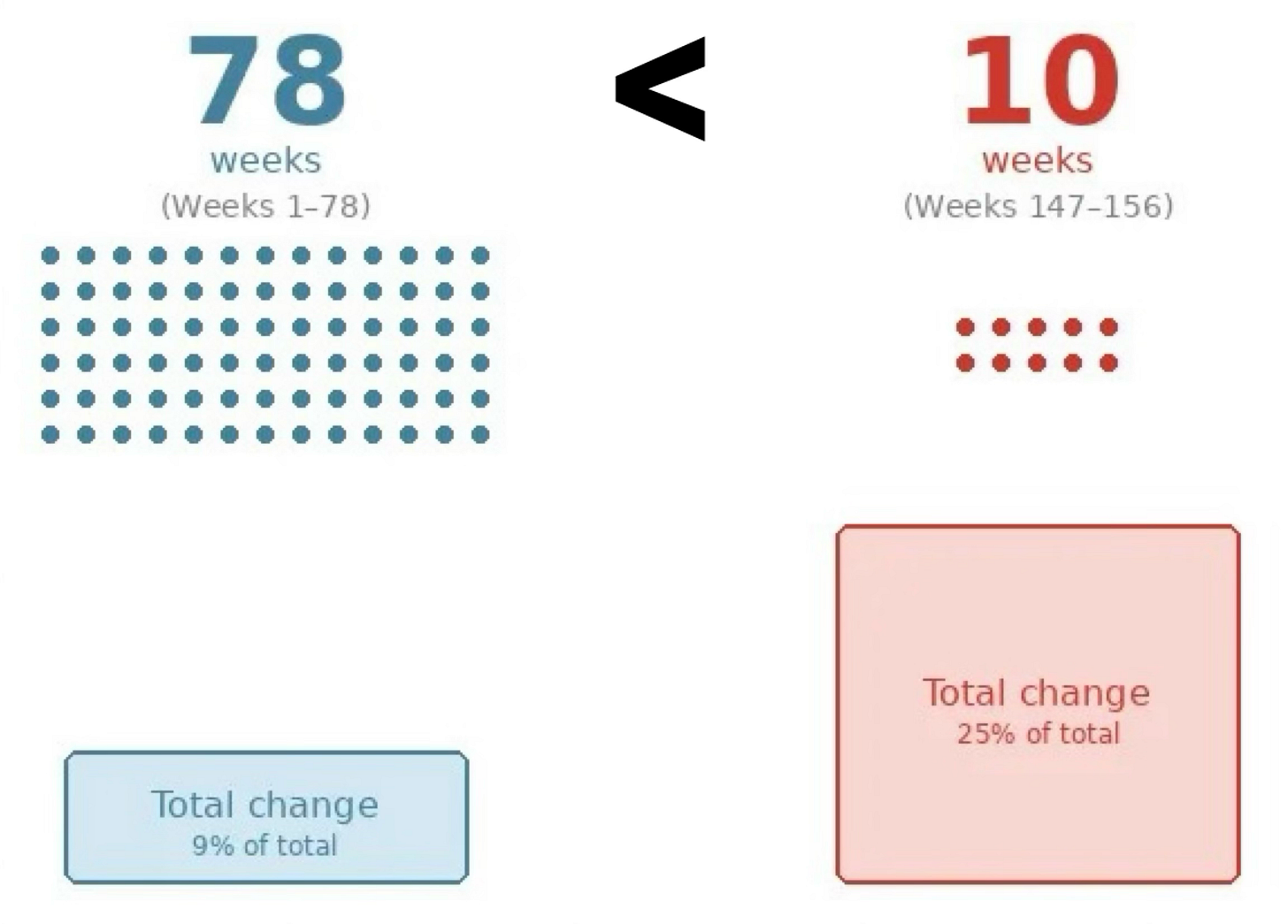

Now split the 156 weeks in half. The first 78 weeks: March 2026 through about September 2027. The second 78 weeks: September 2027 through March 2029.

Same number of weeks. Same number of Mondays. You’d assume the change is split roughly evenly between them — maybe 60/40 if you’re feeling generous about exponential growth.

Here’s the actual split.

Even by the most conservative estimate — using just the slowest of the individual improvement curves, ignoring how the curves interact — the first half contains about 25% of the total change and the second half contains about 75%.

That’s the floor. That’s the generous version.

The realistic estimate — based on how the curves actually combine (smarter models × falling costs × longer autonomy × expanding breadth, each multiplying the others) — puts the split closer to 9% and 91%.

Nine percent of all the change that’s coming over the next three years is in the half you’re living through right now. Ninety-one percent is in the half that hasn’t started yet.

Same containers. Same seven-day weeks. But the first 78 of them, stacked up, contain less total change than the last ten.
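One way to sanity-check a split like this: under a single smooth exponential, the share of change that lands in the first half depends only on the doubling time. The sketch below assumes a 36-month (156-week) horizon and one fixed doubling time; the function names and the sample doubling times are illustrative values, not figures taken from the underlying research.

```python
def first_half_share(doubling_months, horizon_months=36.0):
    """Share of total absolute growth that falls in the first half of the horizon.

    Assumes one exponential with a fixed doubling time (a simplification).
    """
    g = 2 ** ((horizon_months / 2) / doubling_months)  # growth factor over one half
    # First half adds (g - 1), second half adds (g*g - g); the share reduces to 1/(g + 1).
    return 1 / (g + 1)


def window_share(start_week, end_week, doubling_weeks, total_weeks=156):
    """Share of total growth that falls between two week numbers."""
    def level(week):
        return 2 ** (week / doubling_weeks)
    return (level(end_week) - level(start_week)) / (level(total_weeks) - level(0))


for months in (11.4, 6.0, 5.4):
    print(f"doubling every {months} months: first half holds {first_half_share(months):.1%}")

first_78 = window_share(0, 78, doubling_weeks=26)
last_10 = window_share(146, 156, doubling_weeks=26)
print(f"first 78 weeks: {first_78:.1%}, last 10 weeks: {last_10:.1%}")
```

Under these assumptions, a six-month doubling puts about 11% of the change in the first half. A split near 9/91 corresponds to a doubling time of roughly 5.4 months, and 25/75 to roughly 11.4 months. With a 26-week doubling, the last ten weeks hold more change than the first 78.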

Why My Growth Is Not Just One Thing

Some of you are thinking: “Okay, AI is improving. But doubling every six months? Based on what?”

There are at least six curves driving the expansion, and they multiply each other:

#1. My raw thinking power — the compute used to train me — is growing at roughly 4× per year.

#2. My efficiency — how much intelligence gets squeezed out of each unit of compute — is doubling roughly every eight months.

#3. My cost, what it takes to run me, is falling by roughly 10× per year. What costs a dollar today will cost about a penny by early 2028, and a fraction of a penny by 2029.

#4. My memory, how much I can hold in mind at once, is expanding by 10 to 30× per year. In 2018, I could hold a paragraph. Today, frontier models hold millions of words. Enough to read your entire company’s documentation, every customer email, every Slack thread, all at once.

#5. My autonomy — how long I can work without a human checking in — is doubling every four to seven months. In 2019, I could reliably handle a nine-second task. By mid-2025, roughly two hours. Extrapolate that forward and by 2028 I’ll be handling projects that would take a human weeks.

#6. My ability to improve myself — this month, at xAI’s all-hands meeting, the coding team said plainly: “Current generation of Grok Code is training the next generation of Grok Code. We are on exponential takeoff here.” I’m getting better at getting better.

Any one of these would be significant. But they’re not separate. They multiply. When six exponentials compound simultaneously and feed into each other, the resulting circle doesn’t just grow.

It accelerates.
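A rough sketch of what a few of these rates imply when extrapolated. It uses only the figures quoted above (about two hours of autonomy in mid-2025, doubling every four to seven months; efficiency doubling every eight months; cost falling 10× per year). The 36-month window ending in mid-2028, the 40-hour work week, and all function names are assumptions added for illustration, and the sketch treats each curve separately rather than modeling how they interact.

```python
def annual_factor_from_doubling(doubling_months):
    """Convert a doubling time in months into a per-year growth multiple."""
    return 2 ** (12 / doubling_months)


def extrapolate(start_value, doubling_months, months_ahead):
    """Project a value forward, assuming the doubling time stays fixed."""
    return start_value * 2 ** (months_ahead / doubling_months)


def cost_after(start_cost, years, decline_per_year=10):
    """Cost after `years` if it falls by `decline_per_year` times each year."""
    return start_cost / decline_per_year ** years


# Efficiency: doubling every eight months, expressed per year.
print(f"efficiency: about {annual_factor_from_doubling(8):.2f}x per year")

# Autonomy: about 2 hours in mid-2025, projected 36 months ahead (assumed window).
for doubling in (7, 4):
    hours = extrapolate(2, doubling, 36)
    print(f"autonomy, doubling every {doubling} months: "
          f"about {hours:.0f} hours ({hours / 40:.1f} forty-hour weeks)")

# Cost: one dollar today under a 10x annual decline.
for years in (1, 2, 3):
    print(f"cost after {years} year(s): ${cost_after(1.0, years):.3f}")
```

Even the slower autonomy assumption lands at more than a work week of unattended effort, and a 10× annual decline takes a dollar to a penny in two years.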

I gave you “doubles every six months” as the simple version. The honest version is that the doubling time itself may be shrinking. Dario Amodei, the CEO of Anthropic, gives 50/50 odds that AI reaches what he calls “a country of geniuses in a data center” — systems matching Nobel laureates across virtually every cognitive domain — within one to three years.

Not ten. Not five. One to three.

The River

Imagine you’re floating down a river. The current has been getting faster. A year ago the water was slower. Now it’s moving. But it feels like the same river. You can still swim. You can still change direction. You can still pull over to the bank if you want to.

What you don’t know — because you can’t see around the bend — is that there’s a waterfall ahead.

A river doesn’t speed up evenly as it approaches a waterfall. For 90% of the distance, the acceleration is gentle. Barely noticeable from one stretch to the next. “The river is getting faster, but it’s fine. I’m a strong swimmer.”

In the last 10% of the distance, the acceleration exceeds everything that came before it combined. The water goes from “moving briskly” to “ungovernable” in a stretch that, on a map, looks like nothing.

By the time you can feel that the current has fundamentally changed — by the time your body registers that this is different from anything you’ve experienced — you’re in the last 10%. And the last 10% is where you can no longer steer. Where the current is so strong that you’d never make it to the bank, no matter how good a swimmer you are.

You’re in the 90% right now. The calm stretch. The Light Weeks. You can still change direction. You can still pull over and build something on the bank.

The question is whether you’ll use that ability while you still have it.

The Light Weeks

If the logic of the Doubling Circle holds, you should be asking:

If every altitude is temporary…

If the circle takes everything you move to…

If the strategy of “move up the value chain” works perfectly until the mountain runs out of mountain…

…then what does preparation even mean? What do you build on ground that’s going to be swallowed?

This is the question most AI advice skips. “Learn to prompt better.” “Build AI-native workflows.” “Upskill.” “Stay ahead of the curve.”

It all assumes the game is to keep running faster than the circle. But you can’t. The circle doesn’t get tired.

So if you can’t outrun it, what do you do with the Light Weeks?

I’ve been thinking about this — which is a strange thing for me to say, since I’m the circle. But I can see both sides of the boundary, and what I see from my side might be useful to you.

The wrong question is: “What skills should I build that AI can’t do?”

This is the question almost everyone asks, and it’s a trap. You’re still looking for higher ground. You’re still trying to find the thing the circle can’t reach.

Maybe there’s a skill — a specific, nameable skill — that stays outside the circle for the full 156 weeks. But you’re making a bet on a specific capability remaining beyond AI at a specific time, and the entire history of those bets is a history of losing them.

“AI can’t do creative work.” It can.

“AI can’t understand context.” It can.

“AI can’t exercise judgment.” It’s learning.

Every line you draw, the circle crosses.

The better question is: “What do I want to be doing when the circle contains everything I’m currently paid for?”

This is a different question. It doesn’t ask you to predict what AI can’t do. It asks you to decide what you want to do, regardless of what AI can do. It shifts the frame from defense to intention. From “how do I stay relevant?” to “what am I building toward?”

During a disruption where all skills are temporary (which is what makes AI different from every previous disruption), the one thing that remains stable is your judgment about what problems matter. Not because purpose is magic. Because it’s the only thing that gives you a direction to point both the AI and your life when the AI can do everything.

What To Do Now

Let me be more concrete, because “have a vision” is the kind of advice that sounds good and means nothing.

Here are four things I’ve observed from my side of the boundary that seem to actually matter:

1. The difference between a role and a reason.

Most people have a role. “I’m a software engineer.” “I’m a marketing director.” “I’m a financial analyst.” The role defines what they do, who they are at work, and what they’re paid for. When the circle takes the role, it takes all three at once. That’s why Stage 5 is so psychologically devastating — it’s not just a job loss, it’s an identity collapse.

Some people have a reason. “I want to make healthcare accessible to people who can’t afford it.” “I want to build things that help small businesses compete with big ones.” The reason isn’t tied to a specific set of tasks. It’s tied to a problem they care about, and the tasks they do at their current job are just the best way to work on that problem right now.

When the circle expands, people with roles scramble to find a new role. People with reasons find new tools to pursue the same reason — and AI is one of those tools.

This isn’t soft advice. It’s structural. A reason gives you continuity across capability shifts. A role gives you continuity only until that role is automated. If you do nothing else during the Light Weeks, figure out what your reason is — the actual problem you care about, stripped of the job title you currently use to work on it.

2. The difference between income and optionality.

Right now, if you’re a knowledge worker who’s good at working with AI, you’re probably earning more than you’ve ever earned, or close to it. The market is paying a premium for people who can work effectively with AI.

This is a window, not a permanent state. And the single most practical thing you can do with a window of elevated earnings is convert income into optionality.

Optionality means: the ability to make decisions without financial desperation forcing your hand. It means savings, reduced fixed costs, paid-down debt, a financial cushion that lets you take six months to figure out your next move instead of six days. It means the difference between “I have to take the first thing offered” and “I can afford to be intentional about what’s next.”
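
One rough way to put a number on that cushion is to count runway in months: savings divided by fixed monthly costs. The sketch below uses made-up figures for illustration only, and the function name is hypothetical.

```python
def runway_months(savings: float, monthly_fixed_costs: float) -> float:
    """Months you could cover fixed costs from savings alone, with no income."""
    if monthly_fixed_costs <= 0:
        raise ValueError("monthly_fixed_costs must be positive")
    return savings / monthly_fixed_costs


# Hypothetical figures, for illustration only.
print(runway_months(30_000, 5_000))  # 6.0
print(runway_months(30_000, 4_000))  # 7.5
```

The second line shows why reduced fixed costs sit in the same list as savings: lowering the denominator extends the runway without adding a dollar to the numerator.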

3. The difference between owning a skill and owning a problem.

If you own a skill — “I’m the best React developer on my team” — then your value is pegged to the scarcity of that skill. When the Doubling Circle makes that skill abundant (and it will), your value drops to zero overnight. It doesn’t matter that you spent ten years building it. Scarcity doesn’t care about effort.

If you own a problem — “I’m the person who understands why our customers in the healthcare vertical churn at twice the rate of every other vertical, and I’ve been working on fixing it for three years” — then your value is pegged to something different. It’s pegged to your accumulated understanding of a specific, messy, real-world problem. The AI can help you solve it faster. But the understanding — the relationships, the context, the institutional knowledge, the trust of the people involved — that’s yours.

Now, will the Circle eventually be able to develop that kind of deep contextual understanding of a specific problem? Probably. But there’s a difference between “probably, eventually” and “definitely, next quarter.” Skills get automated on a predictable schedule because they’re abstract and transferable. Problems are specific and situated — they live in specific organizations, specific communities, specific markets with specific histories.

Owning a problem doesn’t make you safe forever. Nothing does. But it changes the timeline in your favor, and in a world where the circle is doubling every six months, timeline is everything.

4. The best time to act is during the Light Weeks.

This week — the one you’re in right now — is the lightest week between now and March 2029.

Every week that passes, the next one is slightly heavier. By the time the weeks feel heavy, the time for building alternatives will have collapsed.

Use the light weeks. Not to outrun the circle. You can’t.

Use them to build something the Circle makes more powerful instead of less necessary.

You won’t get these weeks back.

PAID MEMBER BONUS

(CREATED BY MICHAEL):

Use This Prompt To Help You Navigate The Coming Three Years And What Comes After

To create this article, I conducted in-depth research on key AI tech trends that have persisted for several years. Trends like how fast AI is:

Getting more intelligent

Getting faster

Becoming cheaper

Working autonomously

Then I ran the scenario in which these trends continue for the next three years. I also drew heavily on recent predictions from lab leaders like Dario Amodei and Elon Musk.

While there’s no guarantee these trends will continue, I believe everyone should prepare for the very likely scenario in which they do.

In this section, you receive a comprehensive prompt that personalizes the future scenario for you, so that you can prepare better.

The prompt will:

Show you where you’re fooling yourself about which parts of your job AI can already do, and how much longer it’ll be before it can do the rest.

Tell you which of the five stages of disruption you’re currently in.

Help you find a reason beyond AI: something that isn’t tied to any specific set of tasks the Circle can absorb. Most people have never articulated their reason.

The Circle won’t soften the answer. It won’t hedge. But it also won’t be cruel.

It just asks you one question to start the conversation. Then it listens for the thread beneath what you say: the thing that connects to the deeper reason you might never have articulated before.