The Chat Trap: Why the Smartest AI Users Are Working the Hardest

You haven't hit a skill ceiling. You've hit an invisible structural one. Here's how to see it, and how to cross it.

Author’s Note

When I was 16, a book called Unleashing The Ideavirus rewired my brain. Seth Godin said one thing that stuck with me: ideas don’t just happen to spread. They’re engineered to.

That idea sent me on a 25-year obsession.

I spent thousands of hours dissecting why certain ideas break through while seemingly good ones die in obscurity. As a result, I was able to go from a failing blog to publishing articles that have been read cumulatively over 100 million times across Forbes, Fortune, TIME, the World Economic Forum, and the Harvard Business Review.

But my comprehensive approach to reverse-engineering had a cost. On average, a single article took 60+ hours. The method demanded exhaustive cross-disciplinary research and 15 drafts per article. Two tradeoffs haunted every project:

Quality vs. quantity

Augmentation vs. automation

I opted for quality over quantity and augmentation over automation. Every article was a chance to follow my curiosity and grow.

I accepted those tradeoffs for a decade.

This year, Claude Code + Opus 4.6 made them obsolete. Now, it’s possible to get the best of both worlds.

This article is the proof.

It was created using an AI thought leadership system that produces high-quality content quickly, at scale. The system that 16-year-old me was actually looking for, even if he didn’t know it yet.

For the first time ever, I’m now sharing that system with a small group of pioneering entrepreneurs and senior executives at $1M+ companies who want it installed for themselves, their employees, or their company. If you’re interested, reply with BLOCKBUSTER in the comments or to this email, and I’ll send you more details.

Now on to the article…

Quick Summary

I believe the most important AI decision you’ll make this year is moving from the chat paradigm (ChatGPT, Gemini, Grok, Claude.ai) to the agentic paradigm (Codex, OpenClaw, and Claude Code).

I’ve now talked to dozens of people about how they’re making this decision. And there are a ton of misconceptions.

For example:

Many think that Claude Code is just for programmers. It’s not.

Many feel overwhelmed by all the new tools and think Claude Code is like the others. It’s not. It’s way more important.

Many love the Chat paradigm, and they don’t realize the hidden taxes they’re paying and the opportunities they’re missing.

This article provides one of the most comprehensive overviews of the difference between the two paradigms, so you can make your most important AI adoption decision better.

The rise of the agentic paradigm is the new ChatGPT moment, and adopting it sooner will have a profound impact on your life.

Full Article

In the 1880s, the electric motor arrived in American factories. The factory owners did exactly what you’d expect. They ripped out their steam engines and dropped electric motors in the same spot. One giant motor in the center of the building, connected to the same system of shafts, belts, and pulleys that had distributed power from steam.

The result? Almost no productivity gain. For thirty years.

Economists Paul David and Chad Syverson documented this lag extensively. Factories got a better power source but kept the old architecture.

To see why that mattered, picture a steam-powered factory.

One massive engine in the basement spun a central shaft running the length of the building. Leather belts dropped from that shaft to every machine on the floor. If a machine wasn’t within reach of the belt, it didn’t run.

That single constraint dictated everything. Machines were arranged by proximity to the shaft, not by the logic of the work. Workers carried half-finished parts back and forth across the room because the layout served the power source, not the product.

Then came the electric motor. And for thirty years, factories just swapped it in — tearing out the steam engine and running the same shaft off a big electric one. Faster, cleaner, structurally identical.

The constraint was gone. The behavior wasn’t.

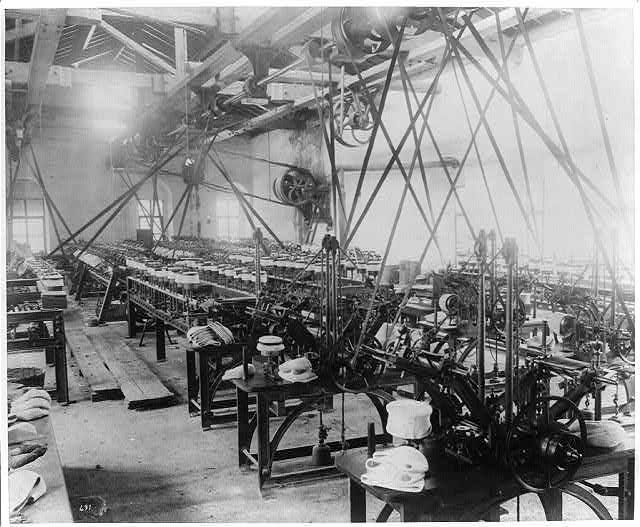

It took a full generation before Henry Ford realized the obvious. Electric motors didn’t need to be centralized. You could put a small motor on each machine. And once you did, you could rearrange the entire factory floor around the flow of work instead of the flow of power. The assembly line was born.

That’s when productivity exploded. Not when the technology arrived, but when the architecture changed to match it.

As the economic historian Paul David observed:

The productivity gains from electrification achieved their full flowering only after manufacturers ceased trying to adapt dynamo technology to the mechanical drive factory and began constructing factories around the new technology.

Think about what David is really saying here. The technology wasn’t the bottleneck. The mental model was. Factory owners kept trying to make a revolutionary technology fit inside a pre-revolutionary structure, and then wondered why it didn’t feel revolutionary.

This pattern didn’t just happen once. It happens every time a revolutionary technology meets an old way of working.

What’s going on here?

Why do smart people keep making this same mistake?

And what does it have to do with how you’re using AI right now?

The Pattern That Keeps Repeating

The factory story isn’t unique. The same pattern (revolutionary tool, old architecture, disappointing results) repeats across every domain I’ve studied...

Exhibit #1: Personal Computing.

The first spreadsheet users treated VisiCalc like a faster calculator. They’d compute one cell, write down the answer, clear the screen, and compute the next. It took years before people realized the power wasn’t in any single calculation. It was in the connections between cells. The same data updated everywhere simultaneously. The spreadsheet wasn’t a better calculator. It was a different category of tool entirely. But only if you stopped using it like a calculator.

Exhibit #2: Photography.

When digital cameras first appeared, professional photographers used them exactly like film cameras. One shot, careful composition, review later. They didn’t exploit the fact that digital eliminated the cost of experimentation. It took a new generation of photographers, people who’d never internalized the “film is expensive” constraint, to discover that the real advantage was shooting thousands of frames and finding the one that captured something no amount of careful composition could have planned.

Exhibit #3: Medicine.

When electronic health records replaced paper charts, most hospitals just digitized the paper. Same forms, same workflows, now on a screen. The result was that doctors spent more time on documentation, not less. It wasn’t until systems were redesigned around what digital made possible (shared records, automated alerts, pattern detection across thousands of patients) that the technology delivered on its promise.

Exhibit #4: Music Production.

When digital audio workstations replaced analog tape, the first generation of producers used them as better tape machines. Record, edit, mix. Same linear workflow. The breakthrough came when producers realized digital audio could be non-linear. You could remix, layer, and restructure infinitely without degradation. That insight created entirely new genres of music.

The pattern is always the same.

A new technology arrives. People use it to do the old thing slightly faster. They get modest improvements and a lot of new frustrations. Then someone realizes the technology enables an entirely different way of working. And that’s when the real transformation happens.

Bottom line: The value of a revolutionary tool is never in doing the old thing better. It’s in doing a new thing that the old tool made impossible.

And right now, with AI, almost everyone is still doing the old thing.

Including me. That is, until the arrival of Claude Code...

The Chat Trap: Four Walls That No Amount Of Prompting Can Fix

Most AI advice for the past three years has boiled down to one idea: get better at the conversation.

Write clearer prompts.

Provide more context.

Learn the right frameworks for talking to AI.

This advice isn’t wrong. But it has a ceiling. And if you’re reading this, you’ve probably already hit it.

The ceiling isn’t about your skill level. It’s about the category of tool you’re using. Conversational AI (ChatGPT, Claude Chat, Gemini, Grok) has four structural limitations that no amount of prompting expertise can overcome. I call them The Four Walls.

For most people, these four walls are invisible. As a result, most knowledge workers are resistant to moving over to Claude Code.

Chat Wall #1: The Copy-Paste Tax

Here’s a workflow that might sound familiar.

You have a brilliant conversation with Claude about your next article. The AI produces excellent structural suggestions, a compelling hook, and three cross-domain examples you hadn’t considered. Great output.

Now what?

You copy the relevant pieces into a Google Doc. You reformat them. You open a new chat to work on a different section. You paste in the context from the first conversation so the new chat understands what you’ve already decided. You get new output. You copy that into the Doc too. You realize the AI in the second chat contradicted something from the first chat. Of course it did. They don’t know about each other.

By the end of this process, you’ve spent as much time managing the information flowing in and out of AI as you spent doing the creative work itself.

Let me put some rough numbers on this. A typical heavy Chat user might experience the following friction on any given day and not even realize it:

Re-establishing context: 4-6 times per day, ~5 minutes each = 20-30 minutes. Pasting in your voice guide, your project requirements, reminding the AI what you already decided in a different chat.

Copy-pasting between chats and documents: 15-20 transfers per day, ~3 minutes each = 45-60 minutes. Copying output into Google Docs, pasting context from one chat into another, reformatting along the way.

That’s over an hour per day of pure overhead. Not creative work. Not thinking. Not producing. Just shuttling information between tools that can’t talk to each other.
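To make that arithmetic easy to rerun with your own numbers, here is a minimal sketch. It uses only the rough estimates from the two items above; the dictionary layout and the function name are illustrative choices, not part of any tool.

```python
# Rough daily overhead from the two friction sources listed above.
# Each entry: (low count per day, high count per day, minutes per occurrence).
FRICTION = {
    "re-establishing context": (4, 6, 5),
    "copy-pasting between chats and documents": (15, 20, 3),
}

def daily_overhead_minutes(friction):
    """Return (low, high) total minutes per day across all friction sources."""
    low = sum(lo * minutes for lo, _, minutes in friction.values())
    high = sum(hi * minutes for _, hi, minutes in friction.values())
    return low, high

low, high = daily_overhead_minutes(FRICTION)
print(f"{low}-{high} minutes per day")  # 65-90 minutes per day
```

Swap in your own counts and the total updates. On these estimates the range works out to 65-90 minutes a day.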

You didn’t eliminate busy work. You traded one kind for another.

Chat Wall #2: The Upload Wall

You have 200 research notes. Or a decade of journal entries. Or an archive of 500 podcast transcripts. You want AI to work with all of it. Find patterns. Surface connections you’ve missed.

But Chat only lets you upload about 20 files at a time. In specific formats. With size limits.

So you manually select a batch. Convert any files that are in the wrong format. Upload. Wait. Run your prompt. Then do it again for the next batch. And even after you get files in, Chat can only read them. It can’t modify the originals. Every output is a copy that you have to manually merge back.

You’ve become a human file shuttle. Selecting, converting, uploading, downloading, merging. Over and over. So that AI can access the information that’s already sitting right there on your computer.

This is the equivalent of the factory owner who had to manually carry materials from one machine to the next because the floor layout was designed around the central shaft, not the flow of work.

Chat Wall #3: The Lock-In

Your files can’t get in easily. But here’s the other side of that problem: your work can’t get out.

Every insight you’ve had, every framework you’ve refined, every perfect prompt you’ve crafted, every artifact you’ve created inside Chat lives on someone else’s server. In a proprietary format. Accessible only through their interface.

Want to switch from Claude to ChatGPT because one is better for a specific task? You start from zero. Your Claude Projects don’t transfer. Your ChatGPT custom GPTs don’t export. The six months of refined context you built inside one platform stays inside that platform.

This isn’t just inconvenient. It’s strategically dangerous. You’re building your most valuable intellectual work inside a container you don’t control. If the platform changes its pricing, degrades its quality, discontinues a feature you depend on, or gets leapfrogged by a competitor, your options are: stay and accept it, or leave and lose everything you built.

The more sophisticated your use of Chat, the deeper this lock-in gets. The power user with 50 carefully constructed Projects and a library of custom instructions is the one who can least afford to leave. The tool rewards your investment by making you more dependent on it.

Your files can’t get in. Your work can’t get out. And the longer you stay, the harder it is to leave.

Chat Wall #4: Context Rot

This is the subtlest wall, and maybe the most dangerous, because you don’t notice it happening.

Imagine you’re working on an ambitious project in Chat. A long article. A detailed strategy. A complex analysis. The conversation grows to 40, 60, 80 messages. You’re deep in it. You feel like the AI is tracking everything perfectly.

It’s not.

As conversations grow, the context window fills up. Earlier instructions get pushed toward the edges of what the model attends to. The AI starts quietly losing track of things you told it 40 messages ago. Your voice standards. Your structural requirements. Specific decisions you made early in the conversation.

It doesn’t warn you that it’s forgotten. It just drifts. Confidently producing output that contradicts what you established at the start. You catch some of these contradictions. You don’t catch all of them.

This is context rot. The silent degradation of AI quality as conversations grow longer. The more ambitious your project, the worse it gets.
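A deliberately crude toy model can make the drift concrete. It assumes a fixed word budget and that the oldest messages fall out of view first. That is a simplification for illustration, not a description of how any particular chat product or model handles long conversations, and the function name and numbers are invented.

```python
def visible_messages(messages, budget_words):
    """Keep the most recent messages that fit within a fixed word budget.

    Toy assumption: the oldest messages are the first to fall out of view.
    """
    kept, used = [], 0
    for message in reversed(messages):
        cost = len(message.split())
        if used + cost > budget_words:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))

# An early instruction followed by 80 later messages.
conversation = ["Voice guide: write in short, punchy sentences."]
conversation += [f"Message {i}: more discussion of the draft." for i in range(1, 81)]

window = visible_messages(conversation, budget_words=300)
print(conversation[0] in window)  # False
```

In this toy version, the voice guide from the first message is no longer in view by the end, and nothing signals that it dropped out.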

The cruel irony: the most sophisticated, ambitious use of Chat is exactly the use case where Chat fails most badly. It’s as if the factory got less efficient the more machines you added to the central shaft. Which is exactly what happened.

What I’m Calling This

These four walls (the Copy-Paste Tax, the Upload Wall, the Lock-In, and Context Rot) aren’t bugs. They’re not things that will be fixed in the next model update. They’re structural features of conversational AI.

Together, they form The Chat Trap.

The Chat Trap is what happens when you get really good at a tool designed for one-off exchanges and try to use it for cumulative, system-level work. The better you get, the harder you hit the ceiling. The more context you generate, the more overhead you create to manage it. The more ambitious your projects, the more the architecture fights you.

And it’s not 1 + 1 + 1 + 1. These four walls multiply each other. Context Rot makes the Copy-Paste Tax worse (you’re shuttling context that may already be degraded). The reset between sessions makes the Upload Wall worse (Projects preserve your source files, but not the thinking you did with them). The Lock-In makes everything worse (the deeper you go, the harder it is to try alternatives). The compound effect is an overhead burden that grows faster than your productivity.

The conventional wisdom says: “Get better at the conversation.”

What if the answer isn’t a better conversation, but an entirely different relationship with AI?

The Crossing: From Conversation to System

Technology alone is rarely enough to create significant benefits.

Georgios Petropoulos & Erik Brynjolfsson (MIT & Stanford researchers)

Here’s what I was wrong about when I first hit this ceiling. And it took me months to admit it.

There are now two fundamentally different ways to work with AI. Not two products. Two categories.

Category 1: Chat AI. You talk to AI. It responds. Each session is self-contained. You manage the context, the files, and the workflow. The AI is a conversation partner. Brilliant, but amnesiac.

Category 2: Agentic AI. You direct AI. It takes action. It reads your files locally, searches the web, creates documents, deploys multiple sub-tasks in parallel, and builds things that persist on your computer as files you own. The conversation is the interface, but the output is infrastructure. Systems that compound over time.

This is a category shift, not a feature upgrade.

Think about the difference between a calculator and a spreadsheet. A calculator answers one question at a time. You punch in numbers, get a result, write it down, clear the screen, start over. A spreadsheet is a system. Cells reference other cells, formulas update automatically, one change cascades through the entire model. Both do math. But they’re fundamentally different things.

The calculator is a tool. The spreadsheet is infrastructure.

To make the most of AI, you 1,000% need a spreadsheet. Not a calculator.

What This Actually Looks Like: A Case Study

Let me show you what actually happens when you cross from one category to the other.

This is a real thing I built in the last few months.

I’m not a programmer. I’ve never been a programmer. I don’t know Python, JavaScript, or any programming language. I’m a writer, a teacher, and someone who’s spent 25 years reading books and thinking about mental models.

Case Study: Article Factory

What Writing Was Like in the Chat Trap: Writing a big article used to take me weeks. Research across multiple domains. Synthesizing dozens of sources. Finding cross-disciplinary connections. Structuring the argument. Writing. Editing. Quality control. Each step was a separate Chat session, each starting from scratch.

What I built: A 30+ step article production system. When I give it a topic or rough idea, it automatically follows the stages below over the course of an hour:

Classifies the input and identifies research directions

Deploys four parallel research agents across different disciplines, mediums, and platforms

Pulls from relevant paradigms, mental models, historical cycles, and lenses to understand everything and put it in context

Brainstorms dozens of ideas and narrows them down to a shortlist via an innovation tournament

Consults a panel of expert personas to pressure-test the idea I selected

Synthesizes everything into an article architecture

Writes a full 4,000-6,000-word draft following my voice guide

Runs a 12-dimensional quality audit against my specific standards
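For readers who want to picture the shape of a system like this, here is a minimal sketch of stages run in order with one stage fanned out in parallel. It is not the actual system: `research` is a hypothetical stand-in for an AI research agent, and the stage names and topic are invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-in for a research agent; a real one would call an AI model.
def research(discipline, topic):
    return f"[{discipline}] notes on {topic}"

def run_pipeline(topic, disciplines):
    """Run the stages in order, fanning the research stage out in parallel."""
    state = {"topic": topic}

    # Stage: parallel research, one worker per discipline.
    with ThreadPoolExecutor(max_workers=len(disciplines)) as pool:
        state["research"] = list(pool.map(lambda d: research(d, topic), disciplines))

    # Later stages are plain steps that read and extend the shared state.
    state["outline"] = [f"Section drawing on {note}" for note in state["research"]]
    state["draft"] = "\n".join(state["outline"])
    return state

result = run_pipeline("the chat trap", ["economics", "psychology", "history", "design"])
print(len(result["research"]))  # 4
```

The point of the sketch is the structure: each stage writes its output into shared state that the next stage reads, so nothing has to be copied between windows by hand.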

The system produces a first draft that’s deeper than what I could write alone. It’s not that the AI is smarter than me. It’s that the system applies my 25 years of accumulated expertise simultaneously, across more dimensions than I can hold in my own head at once. My mental models, my cross-domain research habits, my quality standards, my voice. All running in parallel.

What I typed: A one-paragraph description of the idea I wanted to explore.

What I got: A production system that now exists permanently. Every article I write from now on benefits from it. And it gets better every time I refine my standards, because the system updates automatically. This article is proof that it works.

Other Things I’ve Built

The article factory is just one of dozens of systems that I’ve built. Below is a sampling of 10, to give you an idea of things I’m doing in Claude Code that would’ve been impossible or impossibly difficult in just Claude chat.

Text To RSS. Turns any article into a podcast episode and puts it on an RSS feed that I can listen to on Snipd. When I come across a long article, I send it to this skill so I can consume it while walking.

Mental Model Manual Writer. Produces a 10,000-30,000-word deep-dive manual on a single mental model. I can create multiple manuals at once with parallel agents. So far, I have created 300+ manuals, which are used in my content creation process.

AI Second Brain Creator. Gathers all of my notes, podcast clips, books, transcripts of YouTube videos I’ve watched or subscribed to, and articles I’ve liked. It then turns them into 5K+ interconnected atomic notes that form a navigable knowledge graph, which AI can use to give me personalized responses as I develop articles.

Podcast Enricher. Goes through hundreds of podcast episodes that I’ve clipped in my library, finds the corresponding YouTube video, transcribes the full episode, downloads the full video, and cuts video clips at the exact moments I highlighted. It runs automatically every day at 9:30am EST to ingest new podcast episodes that I’ve clipped.

Proposal Generator. Automatically builds sales proposals for my thought leadership consulting offers using my business positioning, models from five of my favorite sales books, prior proposal templates, and transcripts from sales calls.

Substack Scraper. Point it at any Substack publication and it pulls down every single post, saving each as its own clean document with the original link preserved.

Deep Prospect Finder. Builds a searchable database of prospects by sweeping 23+ platforms, then tiers the prospects and writes personalized outreach angles for each one.

Second Order Show Producer. Stitches together my daily video clips created in the Podcast Enricher (see above), then has a cloned avatar of me introduce each clip and explain the second-order consequences.

Title Factory. Reads the actual performance data from my past articles and 4,000 A/B tests, generates 30 high-performing titles, then creates actual cover-image variations for the best ones.

Idea Machine. Takes a single seed idea and methodically expands it into dozens of related angles, variations, and adjacent concepts, saving each one so I can come back and mine them later. This skill was created by analyzing the transcripts of hundreds of my classes.

This is just a sampling.

Bottom line: Rather than constructing prompts that I forget to use and copying and pasting between chats, I’m now building self-improving systems that chain many skills together automatically. They create high-quality outputs I can use immediately, outputs that are impossible to create in chat.

The Deeper Shifts Underneath

It would be easy to read those examples and think: “That’s impressive but niche. It’s about writing articles.” It’s not. The article factory is just one instance of a universal principle.

Swap “article” for whatever your expertise produces:

A consultant’s strategic frameworks.

A coach’s diagnostic process.

A founder’s decision-making methodology.

An analyst’s pattern-recognition across datasets.

A researcher’s literature synthesis.

A designer’s aesthetic judgment.

Every expert has a version of the same bottleneck: deep knowledge that can only produce at the speed of one person sitting in one chair having one conversation at a time.

The specific systems I built are irrelevant. The shift underneath them isn’t.

That shift has a few dimensions worth naming, because they change more than your output. They change how expertise itself works.

#1. Your Expertise Becomes A Compounding Asset

In the Chat Trap, your AI relationship resets every day. Every session starts fresh. The brilliant conversation you had yesterday? Gone. The methodology you refined last week? Re-explain it.

In the agentic paradigm, which I call the Architect’s Paradigm, every session builds on the last. Your voice guide gets refined. Your research base grows. Your workflows get smarter. Your quality standards get more precise.

For example, every time I run a system, I have checkpoints at each step where I give feedback. After I give feedback, I say, “Update the skill based on what I said.” Now, when the system operates in the future, my feedback will already be baked in.

This is fundamentally different from the chat approach, where I need to open a prompt, find the correct spot in it, and edit it by hand to improve the system. That’s enough friction that I end up improving my prompts less often.
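The underlying idea is that feedback lands in a plain file the system reads on its next run. Here is a minimal sketch of that idea, under stated assumptions: in practice the agent edits the skill file itself, and the file name, the "## Feedback" section, and the `record_feedback` helper are all invented for illustration.

```python
from datetime import date
from pathlib import Path
import tempfile

def record_feedback(skill_path, feedback):
    """Append a dated feedback note so future runs of the skill can read it."""
    path = Path(skill_path)
    existing = path.read_text(encoding="utf-8") if path.exists() else "# Skill\n"
    note = f"- {date.today().isoformat()}: {feedback}\n"
    # Assumes the feedback section, once created, is the last section in the file.
    if "## Feedback" not in existing:
        existing = existing.rstrip("\n") + "\n\n## Feedback\n"
    path.write_text(existing + note, encoding="utf-8")
    return path.read_text(encoding="utf-8")

# Usage with a throwaway file; "article-skill.md" is a made-up name.
skill_file = Path(tempfile.mkdtemp()) / "article-skill.md"
record_feedback(skill_file, "Open with a concrete story, not a statistic.")
updated = record_feedback(skill_file, "Keep paragraphs under four sentences.")
print(updated)
```

Each note persists between sessions, which is what lets the next run start from where the last one ended.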

This is the difference between simple interest and compound interest. In the Chat Trap, you earn interest every day but it’s withdrawn every night. In the Architect’s Paradigm, interest earns interest. Six months from now, you don’t just have “experience with AI.” You have an intellectual infrastructure. A system that encodes decades of your expertise into operational tools that work alongside you.

Peter Drucker once wrote:

The most important contribution of management in the 20th century was the fifty-fold increase in the productivity of the manual worker.

Then he added:

The most important contribution management needs to make in the 21st century is similarly to increase the productivity of knowledge work and knowledge workers.

Think about what Drucker is really saying here. Manual workers got a 50x productivity gain. Not from working harder, but from systems. Assembly lines, standard operating procedures, quality control processes. The knowledge equivalent of those systems has never existed.

Until now.

For the first time, a knowledge worker can build operational systems around their own expertise. Systems that run, produce, and compound.

#2. You Get To Do the Part That Actually Matters

Let me be honest about something. AI doesn’t write like you. Even with a detailed voice guide, even with your best work as training data, a reader who knows what to look for can tell. The cadence is too even. The word choices are slightly off. The personality is approximated, not embodied.

The Architect’s Paradigm doesn’t solve that. What it solves is the production bottleneck that kept you from doing the part only you can do.

Before, writing a deep article meant weeks of work: researching across domains, organizing sources, building structure, writing a first draft, editing, quality-checking. Most of that work isn’t where your voice lives. Your voice lives in the last 10%. The specific analogy you choose. The sentence you cut because it’s trying too hard. The moment where you break from the structure because the idea demands it. The aside that only someone with your exact experience would think to include.

The system handles the first 90%. Research, structure, a working draft that’s directionally right but not yours yet. That’s the part that used to eat weeks. Now it takes hours.

Which means you actually have time to do the 10% that matters. The part where the work becomes unmistakably yours. Before, that 10% often got rushed or skipped entirely, because you’d already spent so long on the production layer that you were tired, behind schedule, or both.

I’ve published more of my best work in the last three months than in the previous year. Not because the AI writes like me. Because I finally have time to write like me.

#3. You Own Everything You Build

Last year, everyone was saying you could run your business with Make.com and n8n. This year, they’re saying the same thing about the next hot tool. There’s always a new platform promising to change everything.

So why is this different?

Because Make.com and n8n were integration layers: plumbing between other people’s software. When the tools changed, the plumbing broke. You were building on someone else’s foundation.

Everything you build in the Architect’s Paradigm lives as plain text files on your own computer. Your methodology. Your standards. Your workflows. Stored as markdown files you own. If the tool disappeared tomorrow, you’d still have every skill, every template, every piece of intellectual infrastructure you created. You could easily use another AI model with your data.
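To show why plain text files travel well, here is a minimal sketch that gathers a folder of markdown files into one block of text you could hand to any model. The folder contents, file names, and the `build_context` helper are assumptions made up for this example, not part of any specific tool.

```python
from pathlib import Path
import tempfile

def build_context(folder):
    """Concatenate every markdown file in a folder into one model-agnostic prompt."""
    parts = []
    for path in sorted(Path(folder).glob("*.md")):
        parts.append(f"## {path.stem}\n{path.read_text(encoding='utf-8').strip()}")
    return "\n\n".join(parts)

# Demo with a temporary folder; the file names and contents are invented.
folder = Path(tempfile.mkdtemp())
(folder / "voice-guide.md").write_text("Short sentences. Concrete examples.\n", encoding="utf-8")
(folder / "quality-standards.md").write_text("Every claim needs a source.\n", encoding="utf-8")

context = build_context(folder)
print(context)
```

Nothing here depends on a vendor format. The files are ordinary text, so the same folder works with whichever tool reads it next.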

Even if a better tool comes along next year (and one probably will), what do you think it’s going to need from you?

Your methodology, clearly articulated. Your standards, written down. Your workflows, structured so an AI can execute them.

That’s exactly what you build in this paradigm. The people who do this work now won’t start over. They’ll be the ones who adopt the next tool in an afternoon, because they’ve already done the hard part: making their expertise explicit, structured, and operational.

Everyone else will still be starting from scratch. Again.

Take Action

The factory owners who reorganized their floors around distributed motors didn’t just get more productive factories. They got factories that could do things centralized power couldn’t do at all. New products. New processes. New business models that were literally impossible under the old architecture.

The same is true here. The Architect’s Paradigm doesn’t just make your current work faster. It makes work possible that was impossible before. The kind of deep, cross-domain, systematized intellectual production that no individual could sustain alone in AI chat.

The technology is here. The architecture is ready. The only question is whether you’ll keep rearranging machines around the central shaft, or redesign the factory.

Get My Thought Leadership System Personally Installed At Your Company By Me

For the first time ever, I’m taking on a handful of one-on-one pioneering clients who are entrepreneurs and senior executives at $1M+ companies who would benefit from being one of the first people in the world to have a blockbuster thought leadership system installed for themselves, their employees, and/or their company. The result of this system is the ability to consistently publish blockbuster content across many channels in your unique voice.

If you’re interested, reply with BLOCKBUSTER in the comments or to this email, and I’ll send you more details.

APPENDIX: Responses To The Top Four Objections To The Agentic Paradigm

No big transition is clean. And pretending this solves everything would insult your intelligence. Let me address the objections I hear most often, because the best ones are worth taking seriously.

#1. “I don’t have time to learn a new tool.”

This one answers itself once you see the math clearly.

Count the hours you spend each week on overhead: re-explaining context, copy-pasting between windows, shuttling files, resolving contradictions between parallel chats, reformatting output. For most serious AI users, this is 10-15 hours per week. That overhead doesn’t shrink as you get better at Chat. It grows.

The Architect’s Paradigm doesn’t add to your workload. It eliminates the overhead that Chat created. The question isn’t whether you have time to learn to work with agentic AI. It’s how much longer you want to keep paying the Copy-Paste Tax and the rest of that overhead.

#2. “What if AI gets good enough that I won’t need systems?”

The work of making your expertise explicit and structured is the work, regardless of which tool executes it. If a future AI can read your structured methodology and run it automatically, you’ll be the person it works for. If you never structured your methodology, you’ll be the person still explaining it from scratch.

The most AI-ready thing you can do today isn’t learn a specific tool. It’s make your expertise legible to machines. That’s what this paradigm demands. And it’s what every future paradigm will demand too.

#3. “I love what I do. I’m not sure I want to become an AI architect.”

This is the most important objection because it gets at the thing people are actually afraid of. That becoming “a systems person” means becoming less of a craftsperson. That the creative work you love will become colder, more mechanical, less you.

I want to make three points that changed my mind on this.

Point #1: Even the work you love is mostly work you don’t love.

Break down any knowledge work task into its actual components and you’ll find something surprising. Most of the hours, even in work you genuinely love, are spent doing things that you don’t.

Take reading a book. Over the years I’ve read 1,000+ books, because I love reading. But here’s a harsh truth. If you’re reading for learning, most books contain maybe 5 to 10 genuinely useful ideas for you specifically. Maybe fewer. Yet you spend hours searching through every page to find them. The joy is the insights. The work is the search. And most of your reading time is the search, not the insights.

Take writing an article. The ideas arrive in flashes. The polish feels like craft. But in between, you’re spending hours formatting footnotes, reorganizing sources, finding that one quote you remember but can’t locate, rewording transitions, rechecking facts. Most of your writing time is production, not creation.

Take running a coaching practice. You love the sessions. You love the breakthroughs. You tolerate the scheduling, the invoicing, the follow-up emails, the content marketing you know you should be doing but avoid. Most of your coaching time is not coaching.

When you build systems around your work, you take yourself out of the parts you never liked in the first place. The parts you tolerated because they were the price of admission to the parts you loved. The system doesn’t replace your craft. It removes the tax you’ve been paying on your craft.

This is something Boris Cherny, who leads Claude Code at Anthropic, put beautifully on Lenny Rachitsky’s podcast:

I have never enjoyed coding as much as I do today because I don’t have to deal with all the minutia.

Boris Cherny, Creator Of Claude Code

Think about what Boris is really saying. He’s not saying he codes less. He’s saying he enjoys coding more. Because the thing he loved about coding was never the minutia. It was the building. And now he gets to do more of that and less of the other.

Point #2: You’re probably wrong about how this will feel.

Here’s the thing that took me a long time to accept. We’re terrible at predicting our own emotional reactions to future experiences.

The Harvard psychologist Daniel Gilbert has spent decades studying this phenomenon. He calls it affective forecasting. And his core finding, documented across dozens of studies and summarized in his book Stumbling on Happiness, is that humans are systematically bad at predicting how future events will make us feel.

We overestimate how happy a promotion will make us. We overestimate how devastated we’ll be by a breakup. We overestimate how miserable we’d be if we had to move, change jobs, start over. We predict our future emotional states using our current ones, and we’re usually wrong.

What this means for this objection is simple. When you predict “I’d hate becoming a systems person,” you’re not accurately forecasting your future experience. You’re projecting your current feelings about a version of the thing you’ve never actually done.

I went through exactly this. And now I love writing more than I did before, because I spend my time on the parts I always loved, not the parts I tolerated.

Point #3: The best creative work has always run on systems.

Pixar has the Braintrust process that pressure-tests every film through structured critique. Motown had Berry Gordy’s quality control meetings where songs had to pass a committee vote before release. Every great chef has mise en place. Ingredients prepped, tools positioned, workflow mapped before the first flame.

These systems don’t replace the creative work. They protect it by handling everything that ISN’T creative.

Building a system doesn’t mean you stop doing the work you love. It means the work you love gets more of your time, not less. The system handles the parts you tolerate. You keep the parts you chose this career for.

If Gilbert’s research is right (and it has been replicated many times), you’re probably going to end up enjoying systems work more than you think you will.

#4. “Can’t I just wait until this is easier?”

You can. But understand what you’re giving up.

This is the compound interest problem. Two investors with the same strategy, the same returns, but one starts five years earlier. The early investor doesn’t have a 5-year head start. They have a compounding head start. The gap between those two investors widens every year.
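If you want to see the widening gap in numbers, here is a minimal sketch. The 7% annual return and the $10,000 yearly contribution are assumptions I picked purely for illustration, and the function names are hypothetical. The only thing taken from the scenario above is the five-year head start with identical strategy and returns.

```python
# Hypothetical numbers chosen only for illustration: neither the 7% return
# nor the $10,000 annual contribution comes from the article.
ANNUAL_RETURN = 0.07
ANNUAL_CONTRIBUTION = 10_000
HEAD_START_YEARS = 5  # the one figure taken from the scenario above


def balance_after(years: int) -> float:
    """Balance after contributing at the end of each year for `years` years."""
    balance = 0.0
    for _ in range(years):
        # Last year's balance grows, then this year's contribution is added.
        balance = balance * (1 + ANNUAL_RETURN) + ANNUAL_CONTRIBUTION
    return balance


def gap_after(late_years: int) -> float:
    """Dollar gap between the two investors once the late one has invested for `late_years` years."""
    early = balance_after(late_years + HEAD_START_YEARS)
    late = balance_after(late_years)
    return early - late


if __name__ == "__main__":
    for late_years in (0, 10, 20, 30):
        print(f"late investor at year {late_years:>2}: gap = ${gap_after(late_years):,.0f}")
```

Under these assumptions the gap is not a fixed five years of contributions. It grows by the same 7% every year, which is the sense in which the head start itself compounds.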

Every week you spend in the Architect’s Paradigm, your systems get smarter. Your intellectual infrastructure grows. Your production capacity compounds.

But every week you spend in the Chat Trap, you start from scratch.

In three years, the person who built systems and the person who stayed in Chat will be fundamentally different kinds of professionals. Not because one is smarter. Because one built infrastructure that compounds and the other kept renting a tool that resets every morning.

Blockbuster
